A strong patent is not just about writing claims that sound big. It is about proving that your invention truly reaches that far.

That is where many founders get stuck.

They know their product well. They know what they built. They know what makes it special. But when it is time to turn that work into a patent, they often describe only the exact version sitting in front of them today. That feels safe. It feels clear. It feels honest.

It also leaves a lot on the table.

The real goal is not only to protect the one version you already shipped. The real goal is to protect the wider idea behind it, so you are not boxed in later by small wording choices you made too early. That is how patents become useful. That is how they protect growth. That is how they support a startup that plans to move fast.

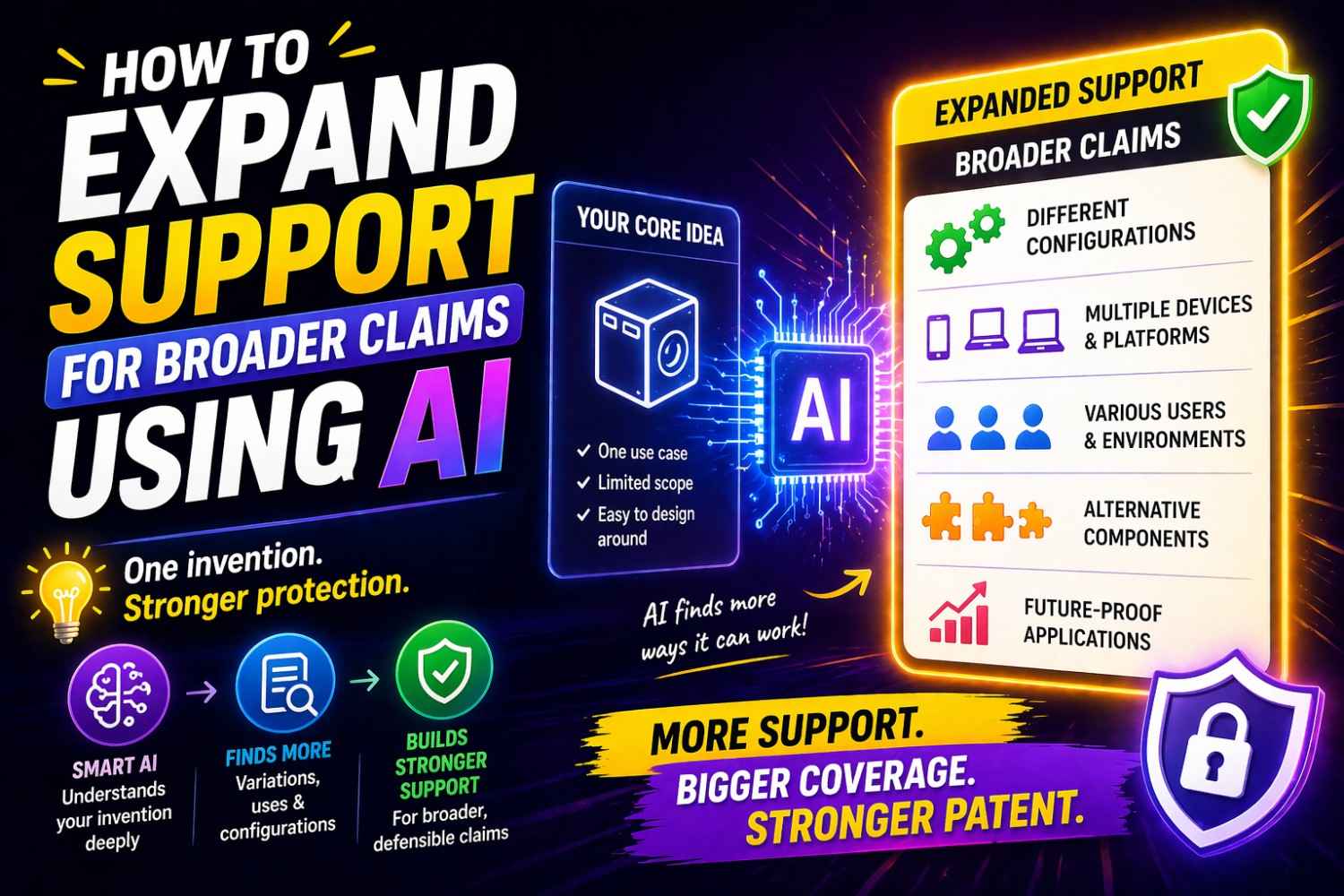

AI can help with this in a very practical way.

Used the right way, AI can help you find more versions, more use cases, more technical paths, more fallback language, and more support in your draft so your broader claims have a stronger base.

It can help you think wider without getting vague. It can help you capture more of the invention before it slips away in your own head. It can help you turn what feels obvious to you into words that actually protect the business.

That does not mean AI replaces legal judgment. It does not. But it can make the whole process stronger, faster, and more complete, especially when real patent attorneys review the final work. That is a big part of why modern founders are moving toward software-driven patent workflows instead of slow, old-school patent drafting. You can see how that works here: PowerPatent’s process.

This article will show you how to use AI to expand support for broader claims in a way that is smart, clear, and grounded in real invention detail.

The real problem with broad claims

Broad claims sound great in theory.

Every founder wants them. Every inventor wants language that covers not just one feature, but the full zone around the feature. You want room. You want leverage. You want protection that grows with the company.

The problem is that claims do not stand alone. They need support.

A patent application is not a wish list. It is not a place to say, “We might someday do many other things too,” and hope that works. The broader the claim, the more pressure there is on the written description behind it. If the application only explains one narrow setup, then broad claim language starts to float. It loses weight. It loses support. That is where trouble begins.

Many founders think the hard part is writing broad claims. In truth, the harder part is building a strong enough technical story underneath those claims.

That story is what gives the claims life.

If you only describe one model, one pipeline, one hardware setup, one training method, one data flow, one user flow, one sensor layout, one deployment path, or one exact architecture, then you are silently telling the world that your invention may be limited to that shape. Even if you never meant to do that.

This happens all the time in startups. Teams are busy shipping. They speak in terms of what exists in the repo right now. They describe the current stack. They show the current product. They explain the current workaround. Then the patent draft starts to mirror all of that too closely.

Months later, the company evolves. The product changes. The model changes. The infrastructure changes. The market changes. The claim scope they wanted is now wider than the support they gave themselves.

That gap is painful because it was avoidable.

AI helps close that gap by helping you pull more knowledge out of your own work at the drafting stage. It helps you stop treating the current build as the only version that matters. It helps you see the invention in layers.

That is the core shift.

When you want broader claims, you do not begin by stretching the claims farther and farther. You begin by expanding the support underneath them.

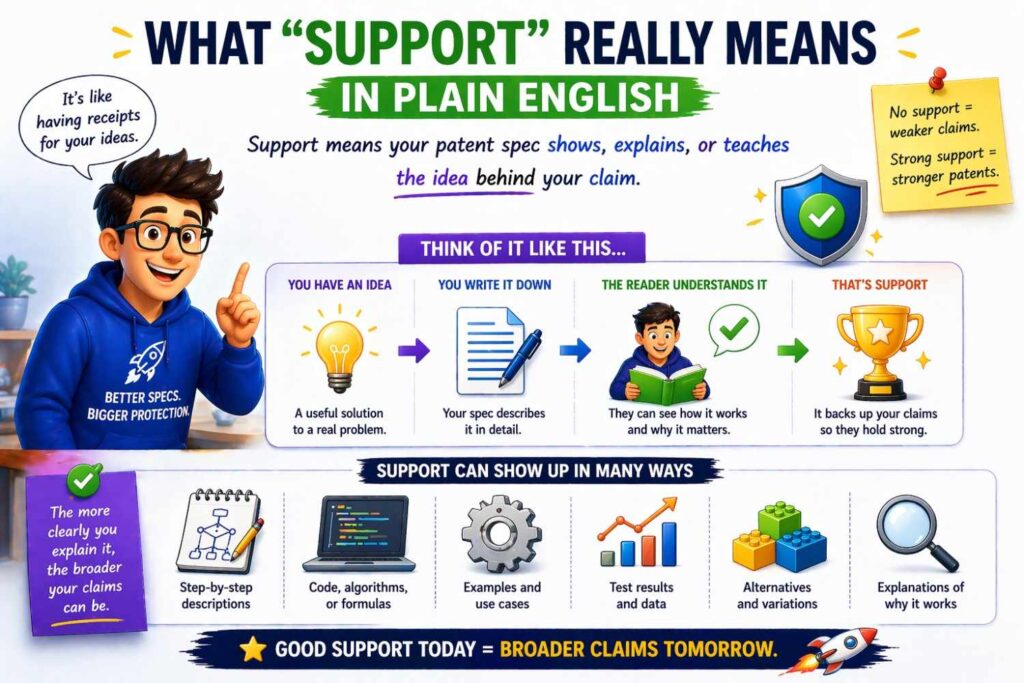

What “support” really means in plain English

Let’s make this simple.

Support means your application gives enough real detail to show that the broader idea is truly part of what you invented. Not as a guess. Not as hand waving. Not as vague future talk. As something connected to the actual invention.

Think of claims like the roof of a house.

The broader the roof, the more structure you need under it.

If the support is thin, the roof sags.

If the support is deep, wide, and well built, the roof can cover more space.

That structure comes from your description and your examples: the variations, options, and technical paths you document; the alternate ways of doing the same job; the substitute parts; and the different deployment modes, data types, control flows, model choices, hardware forms, input sources, outputs, and system goals.

Support also comes from how you explain the invention at different levels.

There is the very concrete level, where you describe the exact thing your team built.

Then there is the function level, where you describe what that thing is doing.

Then there is the system level, where you describe how pieces work together.

Then there is the concept level, where you describe the broader pattern that makes the invention valuable.

A weak patent draft often stops at the first level. A stronger one moves across all of them.

AI is helpful because it can prompt you to move up and down those levels quickly. It can help you ask, “What else could perform this function?” “What part of this flow is essential?” “What is one layer more general than the current implementation?” “What other environments could use the same idea?” “What happens if this component changes?” “What if the model is replaced?” “What if the data source is different?” “What if the order of operations changes?” “What if this is done on device instead of in the cloud?”

Those questions are not fluff. They are how broader support gets built.

Why founders undersupport their own inventions

This is worth understanding because it happens even to very smart teams.

The first reason is closeness. Founders are too close to the product. They know what matters, but because they know it so well, they often skip over important details without noticing. They say things like, “The model ranks outputs based on context,” because to them that covers it. But there may be ten useful details hiding under that sentence that never make it into the application.

The second reason is speed. Startups move fast. Patent work gets squeezed between shipping deadlines, fundraising, hiring, and customer fires. So people default to the easiest path: describe what already exists and move on.

The third reason is fear of saying too much. Some teams worry that if they add many alternatives, examples, and paths, they will somehow weaken the story. In reality, careful breadth in the description often helps, because it shows the invention is not trapped in one narrow form.

The fourth reason is not knowing how patents really become broad. Many people think breadth lives mostly in the claims. So they focus all their energy there. But the claims only work as far as the support lets them work.

The fifth reason is that many drafting processes are old and passive. A founder fills out a form, has one call, sends over a slide deck, and then waits. That often leads to a draft that is decent but not deep. The process itself does not pull enough out of the invention.

This is where AI can change the game.

AI can act like a tireless technical interviewer. It can pressure-test assumptions, suggest overlooked variants, map functions to alternatives, and force a fuller record. It helps founders surface what is already in their heads and code, but not yet on paper.

That is one reason modern systems that combine smart software with real attorney review can be so effective. They help you capture more of the invention early, before the details get buried or forgotten. You can explore that approach here: see how PowerPatent works.

The key mindset shift: stop drafting the product, start drafting the invention space

This one shift alone can improve the quality of a patent draft more than most teams expect.

Do not ask only, “What did we build?”

Ask, “What is the broader invention space we opened up by building this?”

That sounds abstract, but it is actually very practical.

Suppose your team built a system that uses user behavior and account context to predict which support ticket should be auto-escalated. If you draft that too literally, your application may end up being about a support tool that uses a specific ranking model tied to a certain ticket format.

But the actual invention space may be much wider.

Maybe the real invention is using dynamic context from multiple behavior sources to route action priority inside a service system.

Framed that way, the invention can cover tickets, alerts, workflows, fraud reviews, incident response, case triage, internal task routing, or even medical review queues, depending on what the real technical contribution is.

This does not mean you should claim everything under the sun. It means you should identify the real center of the invention, then support multiple ways that center can appear.

AI is very good at helping you make this jump because it can restate your invention at different levels of generality. It can help separate what is essential from what is accidental.

That matters because many narrow details in your current product are not the invention. They are simply one path your team chose.

Your database choice is probably not the invention.

Your exact front-end flow is probably not the invention.

Your current fine-tuned model may not be the invention.

Your current message queue or task scheduler may not be the invention.

Your exact sensor brand is probably not the invention.

Your exact output format may not be the invention.

The invention is usually deeper than that.

AI can help you uncover that deeper layer and then help you write support around it.

The best way to use AI: not as a writer first, but as a technical expander

A lot of people make the same mistake with AI. They ask it to write the patent.

That is usually the wrong first move.

The better first move is to use AI to expand the technical record.

Think of AI as a structured thinking tool before it becomes a drafting tool.

At the start, you want AI to help with discovery. You want it to ask questions, generate variations, test definitions, identify alternatives, and map the invention from multiple sides. You want it to bring out what is missing.

Only after that should you use it to help shape the written material.

This order matters a lot.

If you jump straight to draft text, the AI will often produce language that sounds polished but rests on a thin factual base. You get nice sentences and weak support. That is not the goal.

You want the opposite. You want a rich factual base first. Then the writing becomes powerful because it has something real to stand on.

A simple way to think about it is this:

First use AI to widen your invention map.

Then use AI to deepen each part of the map.

Then use AI to turn that depth into support language.

Then have qualified patent professionals review the output and shape the claims.

That sequence is much stronger than asking for a finished draft on day one.

Start with the exact version you built

It may sound strange, but broader support starts by getting very clear on the narrow version.

You cannot generalize well if the starting point is fuzzy.

So begin with the real implementation. Describe it plainly. What does the system do? What problem does it solve? What are the main parts? What data goes in? What happens to that data? What decisions are made? What outputs come out? Where does the system run? What triggers it? What changes because of it?

This is where founders should not hold back.

Explain the current architecture. Explain the workflow. Explain the model behavior. Explain the edge cases. Explain what was hard. Explain what was slow before. Explain what improved. Explain what the system does when inputs are missing. Explain what happens when confidence is low. Explain how the result is presented or acted on.

AI can help by interviewing you here.

You can ask it to pull out all technical steps from your explanation. You can ask it to identify hidden assumptions. You can ask it to convert a messy product explanation into a clearer system flow. You can ask it to tell you which pieces sound essential and which sound like one implementation choice.

That last point is very important.

Once the exact version is clear, the work of expanding support gets easier because you can see what can vary.

Too many patent efforts skip this stage. People rush to general words too early. That creates weak abstraction. Good abstraction grows out of concrete detail, not instead of it.

Then ask the most valuable question: what could change without changing the core idea?

This question opens the door to broader support.

Take each major part of the current system and ask what could change while the invention still works in spirit.

Could the input type change?

Could the model type change?

Could the ranking logic change?

Could the storage method change?

Could the order of operations change?

Could the signal source change?

Could the user action that triggers the system change?

Could a human review step be added or removed?

Could it run on device instead of in the cloud?

Could it work in batch mode instead of real time?

Could it apply to text, image, audio, sensor, log, transaction, graph, or mixed data?

Could the output be a score, a class, a recommendation, an alert, a transformed asset, a control signal, a generated artifact, or a routing action?

Each answer grows the support base.
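The questions above can be turned into a simple checklist. The sketch below is only an illustration: the component names and the wording of the variation axes are hypothetical, chosen to match the support-ticket example, and are not taken from any real system.

```python
from itertools import product

# Hypothetical component names for illustration only.
COMPONENTS = ["input data", "prediction model", "ranking logic", "trigger"]

# Axes of variation, paraphrasing the questions in the text above.
AXES = [
    "be replaced by a different type",
    "run at a different point in the sequence",
    "run on device instead of in the cloud",
    "operate in batch mode instead of real time",
]


def variation_questions(components, axes):
    """Return one 'what could change?' question per (component, axis) pair."""
    return [
        f"Could the {component} {axis} without changing the core idea?"
        for component, axis in product(components, axes)
    ]


questions = variation_questions(COMPONENTS, AXES)
for question in questions[:3]:
    print(question)
```

Each generated question can be answered by the engineering team or handed to an AI tool as a prompt. Many will not apply, and that is fine. The goal is to make sure no component is skipped.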

This is where AI shines because it is fast at suggesting technical alternatives. Not all suggestions will be good. That is fine. You are not accepting everything. You are using the system to expand the space, then filtering with engineering judgment.

A founder can do this manually, but AI makes it much easier to do at scale and without mental fatigue. It can generate five alternate paths, then ten more, then group them by type, then restate them in functional language, then show where your current draft is too narrow.

That kind of pressure testing is powerful.

Function-first thinking creates better support

One of the best ways to build support for broader claims is to describe components by what they do, not only by what they are.

This does not mean you stop naming concrete parts. It means you add a second layer.

For example, instead of only describing a “transformer-based classifier,” you also describe a “prediction component configured to determine a likely category based on one or more input features.”

Now you have not thrown away the detailed implementation. You still include it. But you also describe the function in a way that can support wider claiming later.

The same goes for system parts.

A cache might also be described as a temporary storage component.

A ranking model might also be described as a prioritization component.

A webhook trigger might also be described as an event-driven activation mechanism.

A vector index might also be described as a similarity-based retrieval structure.

A human QA screen might also be described as a verification interface.

The point is not to use stiff patent language for its own sake. The point is to express the invention at a functional level as well as an implementation level.

AI can help you do this systematically.

You can feed it a component list and ask for functional restatements. You can ask which functions are served by multiple possible implementations. You can ask which implementation details are too narrow if left alone. You can ask it to produce alternate wording layers from concrete to general.
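One way to keep track of the two layers is a small table that pairs each concrete part with its functional restatement. The sketch below is a minimal illustration, not a required format. The `Component` class, the helper names, and the prompt wording are assumptions made for this example. The pairs themselves come from the examples above.

```python
from dataclasses import dataclass


@dataclass
class Component:
    concrete: str          # what the team actually built
    functional: str = ""   # broader description of what it does


# Pairs taken from the examples in the text; "message queue" has no
# functional layer yet and is included to show the gap check.
components = [
    Component(
        "transformer-based classifier",
        "prediction component configured to determine a likely category "
        "based on one or more input features",
    ),
    Component("cache", "temporary storage component"),
    Component("vector index", "similarity-based retrieval structure"),
    Component("message queue"),
]


def missing_functional_layer(items):
    """List concrete parts that still lack a functional restatement."""
    return [c.concrete for c in items if not c.functional]


def restatement_prompt(concrete):
    """Build a hypothetical prompt asking an AI tool for the missing layer."""
    return (
        f"Describe the function served by a '{concrete}' in this system, "
        "then list other implementations that could serve the same function."
    )


for name in missing_functional_layer(components):
    print(restatement_prompt(name))
```

The point of the gap check is simple. Any part that appears in the draft only by its concrete name is a place where the description may be narrower than it needs to be.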

This process is one of the fastest ways to expand support.

It lets the application say, in effect, “Here is one embodiment we built, and here is the broader function that embodiment serves, along with other ways that function can be carried out.”

That is exactly the kind of depth broader claims need.

Use AI to uncover hidden embodiments

A hidden embodiment is a version of the invention that is real and connected, but not yet written down.

Almost every startup has them.

They may be future versions the team already expects to build. They may be old versions tested during development. They may be rejected paths that still teach something useful. They may be customer-specific variants. They may be internal tools built for evaluation. They may be alternate deployment modes the engineers already know are possible.

These hidden embodiments are gold.

Why? Because they often expand support without forcing you to invent fantasy versions of the system. They are close to real work your team already did or seriously considered.

AI can help surface them by prompting the right memories.

Ask it to interview you about prior prototypes.

Ask it to identify what changed between version one and version three.

Ask it to point out where a manual step could be automated.

Ask it to list what parts of the system are already modular.

Ask it what substitute techniques could serve the same role.

Ask it what downstream actions might follow from the same core output.

Ask it how the same technical idea could apply across industries or data sources.

This matters because broader support should not come from fluff. It should come from believable, technically grounded variants. Hidden embodiments are often the cleanest source of that.

A good AI-assisted workflow can bring these out before the application is finalized. That is far better than realizing later that the broader story was there all along but never got captured.

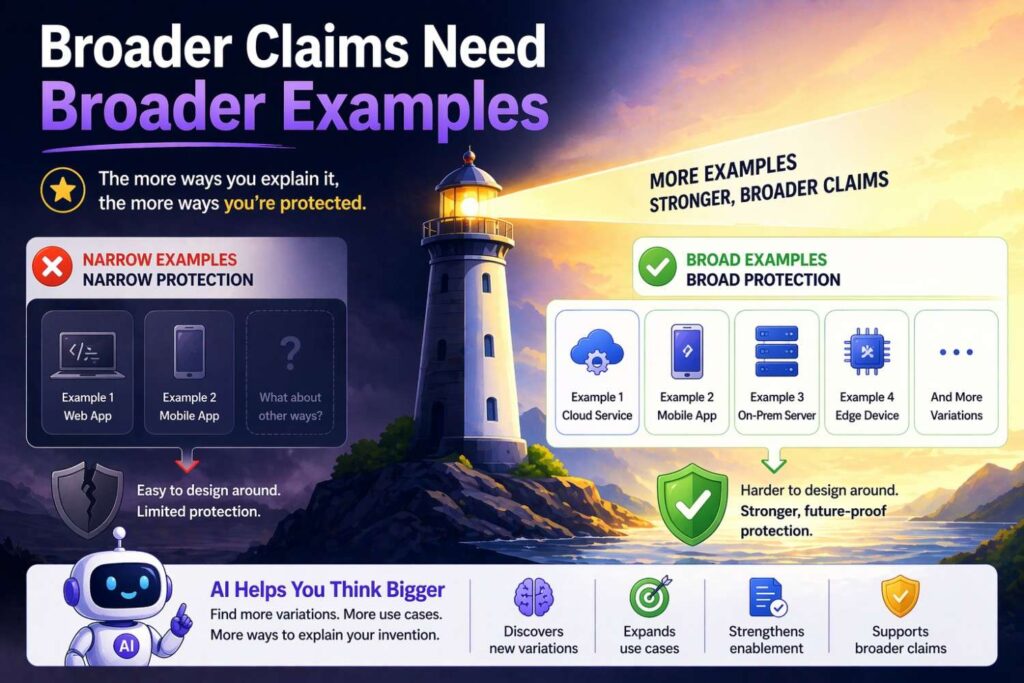

Broader claims need broader examples

One of the easiest ways to strengthen support is to give more examples.

Not random examples. Useful examples.

Examples help show that the invention is not trapped in one setting. They show that the core idea can work across contexts, forms, inputs, and outputs.

Suppose you built a system that uses sensor fusion to detect machine failure in industrial equipment. If your application only talks about one factory machine, that is a narrow lane. But if you describe how similar methods can apply across turbines, pumps, vehicles, robotics, HVAC systems, or remote field equipment, you begin to support a wider view of the invention.

The same principle applies in software and AI.

If your system improves retrieval for one enterprise search product, it may also apply to contract review, customer support knowledge access, medical note search, security triage, codebase navigation, or data room review, depending on the true technical contribution.

The example set matters because it tells the reader that the invention has range.

AI can help generate these example families quickly.

You can ask it to produce domain transfers. You can ask it to show how the same technical method would look in five adjacent settings. You can ask it to identify what stays the same and what changes in each setting.

That last part is crucial.

You do not just want more examples. You want each example to reveal the stable core and the flexible shell. The stable core is what supports broader claims. The flexible shell shows that the invention is not tied to one surface form.

This is one of the most underused moves in patent drafting, and AI makes it much easier to do well.

Expand around inputs, outputs, and decision points

If you want stronger support, three places deserve special attention: inputs, outputs, and decision points.

These are often where narrow drafting sneaks in.

Take inputs first.

Many applications mention only the exact data inputs used in the current product. But inputs are often more flexible than teams realize. The current system may use text and metadata, but maybe it could also use images, logs, telemetry, clickstream data, graph relationships, historical events, location signals, user profiles, or external context. Not all at once, and not without care, but enough to justify wider support if the invention allows it.

Now outputs.

Teams often draft outputs too narrowly as well. They describe the current screen result or current API response, when the same technical method could output a recommendation, a confidence score, a generated command, a sorted list, a transformed representation, a route selection, a control action, or a stored state update.

Decision points matter just as much.

Where does the system decide? When does it branch? What thresholds matter? Can those thresholds be fixed, learned, adaptive, user-defined, system-defined, confidence-based, policy-based, or context-based? Can routing change based on source, timing, load, device type, risk level, or user role?

AI is useful here because it can model these dimensions and suggest alternative branches you may not have documented.

It can say, “This system currently acts after classification. Could it also act before classification by filtering inputs?” Or, “This workflow currently returns a ranked list. Could it instead select a single candidate or trigger a downstream process?” Or, “This threshold is currently static. Could it vary by account type or model confidence?”
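The threshold question is easy to see in code. The sketch below assumes a hypothetical escalation score between 0 and 1 and shows the same decision point with two interchangeable threshold policies, one static and one that varies by account type. All names and numbers are illustrative.

```python
from typing import Callable, Dict

# A threshold policy maps request context to a cutoff score.
ThresholdPolicy = Callable[[Dict], float]


def fixed_threshold(value: float) -> ThresholdPolicy:
    """Static cutoff: the same value for every request."""
    return lambda context: value


def by_account_type(cutoffs: Dict[str, float], default: float) -> ThresholdPolicy:
    """Cutoff that varies by account type, with a default for unknown types."""
    return lambda context: cutoffs.get(context.get("account_type"), default)


def should_escalate(score: float, context: Dict, policy: ThresholdPolicy) -> bool:
    """The decision point stays the same; only the threshold policy changes."""
    return score >= policy(context)


static = fixed_threshold(0.8)
tiered = by_account_type({"enterprise": 0.6}, default=0.8)
request = {"account_type": "enterprise"}

print(should_escalate(0.7, request, static))  # False
print(should_escalate(0.7, request, tiered))  # True
```

If the system is already built so that either policy can be plugged in, that is a strong hint the description should cover both, and possibly others such as a confidence-based cutoff.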

Each of those questions can lead to more support.

The result is an application that does not just describe one straight path. It describes a decision-rich system with room to flex.

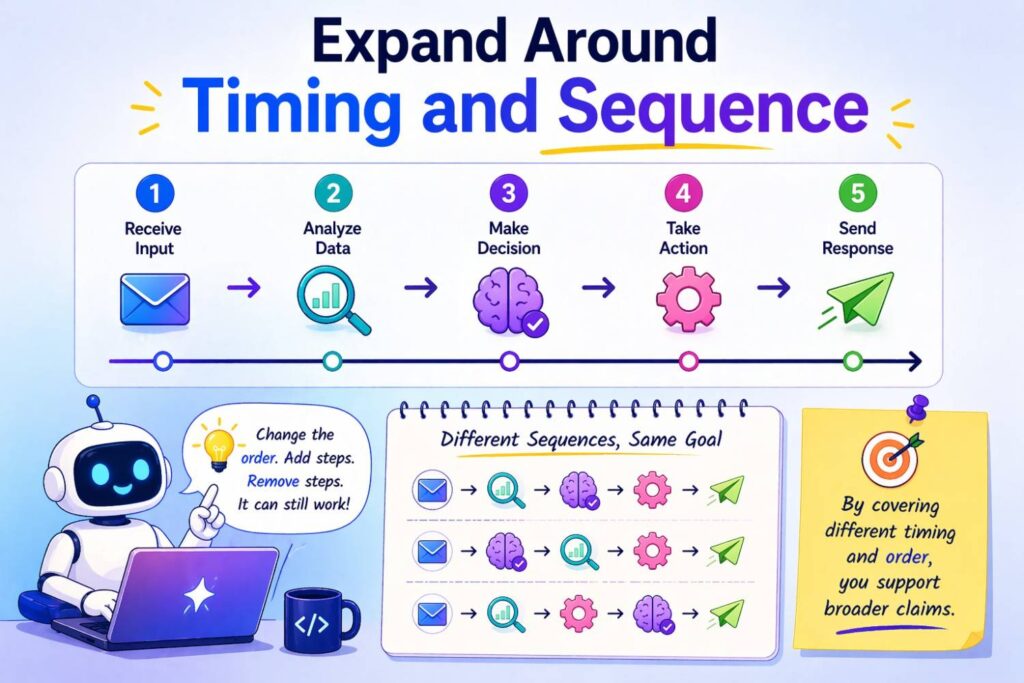

Expand around timing and sequence

This is a subtle but powerful move.

Many inventions are drafted as if the exact order of operations is fixed. But in many systems, timing and sequence can vary without breaking the core idea.

Maybe preprocessing happens before retrieval today, but could also happen after an initial candidate set is generated.

Maybe scoring happens in one pass now, but could be split into coarse scoring and fine scoring.

Maybe the system triggers on user action today, but could also trigger on a scheduled event or upstream signal.

Maybe model outputs are stored first and reviewed later, or reviewed first and then stored.

Maybe training happens centrally now, but adaptation could happen locally later.

If the invention supports those forms, they can expand the written foundation for broader claims.

AI can help by taking a system flow and generating alternate orderings that preserve the core result. It can flag where the current draft accidentally sounds like sequence is mandatory when it is really optional. It can also identify where sequence truly is part of the invention, which is just as helpful. You do not want to generalize away something essential. You want to know what is flexible and what is not.

A strong patent draft often makes this clear in the description. It explains one flow in detail, then states that steps may occur in other orders, in parallel, conditionally, iteratively, or in response to different triggers where appropriate.

That kind of support can make a real difference later.

Expand around environment and deployment

Startups often change where their systems run.

A product that starts in the cloud may later move partly on device.

A system that begins as a SaaS workflow may later be embedded in an enterprise environment.

An internal tool may later become an API or SDK.

A desktop workflow may later become mobile.

A centralized model may later become edge deployed.

A human-assisted process may later become mostly automated.

A broad-support application should make room for these shifts where they fit the invention.

AI can help identify deployment alternatives and phrase them clearly. It can help founders avoid overcommitting to one environment just because that is where version one launched.

This matters more than many people think.

If the draft says the invention is a cloud server doing X, but the real concept could also live on a client device, in a local network node, in a hybrid system, or across distributed components, then broader support may depend on saying so.

The same goes for interfaces.

A system may be exposed through a UI today, but tomorrow it may be consumed by another service. The invention may not care whether a person clicks a button or an upstream system sends an event. If that is true, your support should say so.

This is where AI can be a useful sparring partner. It can ask, “Does this require a server?” “Does this require a user-facing screen?” “Does this require real-time execution?” “Could a batch system still perform the same function?” “Could the same logic be split across multiple devices?”

These questions push the draft out of today’s exact shell and into the wider invention space.

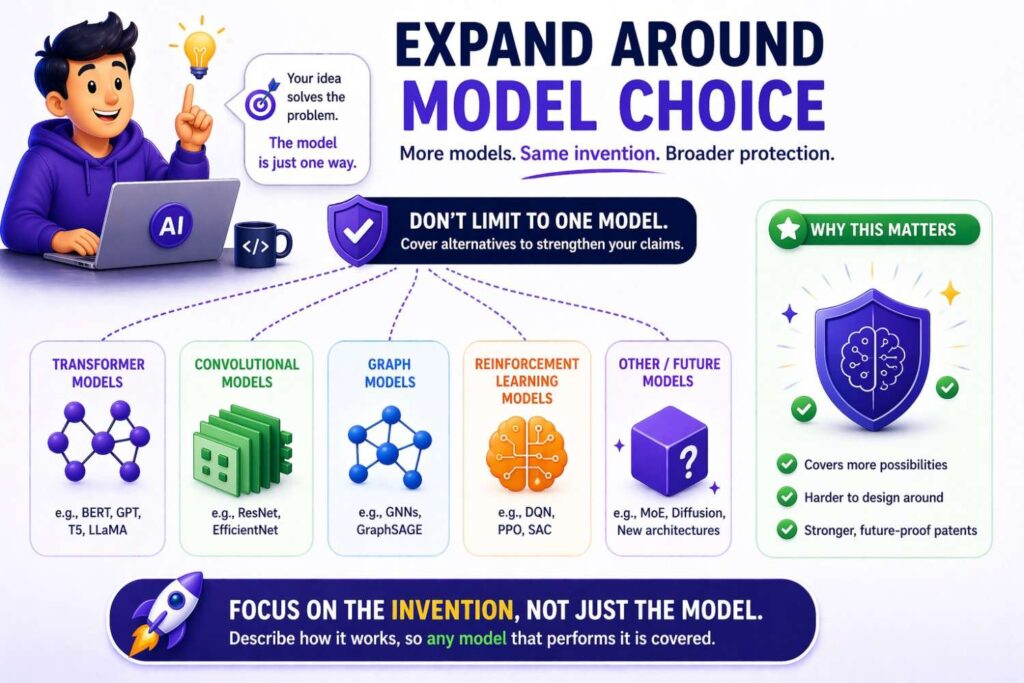

Expand around model choice in AI inventions

This is especially important for AI-heavy startups.

Many teams file patents around products that use a specific model family, embedding method, training process, ranking algorithm, or orchestration pattern. That is understandable, because those are the tools they used.

But model ecosystems change fast.

What is state of the art today may feel old in a year. If your patent support is too tied to one model choice, you may end up with claims that do not age well.

That does not mean you should be vague. It means you should separate the invention from the current model where possible.

Ask questions like these.

What function is the model serving?

Could another model type serve that function?

Does the invention depend on a particular architecture, or only on a capability?

Is the key value in how outputs are used, combined, constrained, or applied?

Is the key value in data preparation, feedback loops, memory management, action selection, quality control, orchestration, or deployment, rather than in the model itself?

AI can help founders unpack this.

For example, if your current system uses a transformer to classify support requests, AI can help you restate the invention in terms of prediction based on structured and unstructured features. If your system uses embeddings and vector search, AI can help identify whether the invention truly depends on that exact retrieval method or on a broader relevance-matching process.

This does not erase the specifics. You should still include them. Specifics prove real implementation. But you also want broader framing so the patent is not married to one technical fashion cycle.

This is one of the biggest practical wins of using AI in patent preparation for AI companies. It helps you describe the invention in a way that is durable, not frozen to the toolset of one month.

Expand around failure modes and edge cases

A founder often thinks edge cases are just engineering details.

In patent drafting, they can be powerful support material.

Why? Because they show the system is robust across more than one perfect scenario. They also reveal alternate logic paths and fallback structures that can support broader claim ideas.

Suppose your system acts one way when confidence is high and another way when confidence is low. That is useful. Suppose it uses a default path when data is missing. Useful again. Suppose it blends model output with rule-based constraints when policy limits are triggered. Also useful.

These branches help demonstrate the invention in richer form.
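As a rough illustration, the branches just described might look like the sketch below. The function name, the action labels, and the 0.7 cutoff are assumptions made for this example. In particular, sending low-confidence cases to human review is only one possible choice.

```python
def route(score, confidence, policy_blocked=False, min_confidence=0.7):
    """Pick an action label; score or confidence may be None when data is missing."""
    if score is None or confidence is None:
        # Missing data: fall back to a default path.
        return "default_path"
    if policy_blocked:
        # A policy limit was triggered: rule-based constraints take over.
        return "rule_based_override"
    if confidence < min_confidence:
        # Low confidence: one possible choice is to ask a human.
        return "human_review"
    # High confidence: act on the model output directly.
    return "auto_act"


print(route(0.9, 0.95))                       # auto_act
print(route(0.9, 0.4))                        # human_review
print(route(None, None))                      # default_path
print(route(0.9, 0.95, policy_blocked=True))  # rule_based_override
```

Each return value is a separate path through the system. A draft that describes all four has more to stand on than one that describes only the last.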

AI can assist by asking what happens when assumptions fail.

What if a signal source is missing?

What if a user does not provide enough context?

What if the model confidence falls below threshold?

What if latency is too high?

What if conflicting outputs are returned?

What if a downstream system is unavailable?

What if the device is offline?

What if the input format is noisy or malformed?

What if the set of candidate results is too small?

Every answer can add support.

This is one of the easiest ways to turn a thin draft into a more serious one. Instead of writing only the happy path, you write a system that can operate through real conditions.

That does two good things at once. It makes the invention feel more complete, and it expands the range of supported structures.

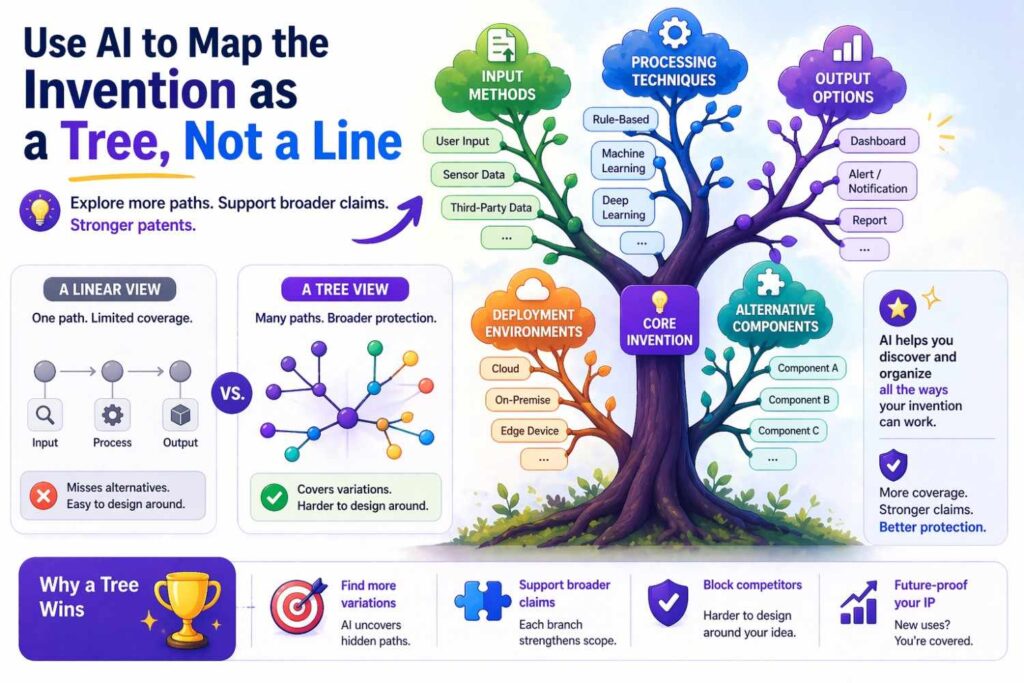

Use AI to map the invention as a tree, not a line

Many draft descriptions feel linear.

They read like a demo script. First this happens, then this happens, then this happens, and the result appears.

That is not wrong, but it is often incomplete.

A better way to think about support is as a tree.

At the trunk is the core inventive concept.

From there, branches spread into variations of inputs, components, flow paths, environments, outputs, fallback rules, and application settings.

AI is very good at helping build this tree.

You can ask it to take one system flow and generate a branching structure. One branch may vary how data is gathered. Another may vary where processing happens. Another may vary how results are selected. Another may vary what the system does with uncertain outputs. Another may vary who or what consumes the result.
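A support tree can be as simple as a nested structure. The sketch below is an illustration only. It reuses the routing example from earlier in this article as the trunk, and the branch contents and the `thin_branches` helper are hypothetical.

```python
# Trunk: the core concept. Branches: dimensions along which it can vary.
support_tree = {
    "core": "use dynamic context from multiple behavior sources "
            "to route action priority",
    "branches": {
        "data gathering": ["user behavior", "account context", "telemetry"],
        "processing location": ["cloud", "on device", "hybrid"],
        "result selection": ["ranked list", "single candidate"],
        "uncertain outputs": ["human review", "default path"],
        "result consumer": ["user interface", "upstream service"],
    },
}


def thin_branches(tree, minimum=3):
    """Return branch names with fewer documented variants than `minimum`."""
    return [
        name
        for name, variants in tree["branches"].items()
        if len(variants) < minimum
    ]


print(thin_branches(support_tree))
```

A branch with only one or two variants is not automatically a problem. It is just a prompt to ask whether more real variants exist, or whether that part of the invention is truly fixed.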

This tree view changes the quality of the draft because it forces you to see the invention as a family rather than as a single demo.

That is what strong support often looks like.

Not endless random variants. A coherent tree built around the real core.

This also helps later when claim strategy comes into play. Once the support tree exists, different claim sets can anchor on different branches. But without the tree, you may only have one narrow lane to work with.

AI can help translate code and architecture into patent support

Many deep tech founders already have rich support hiding in technical materials.

It may live in code comments, design docs, tickets, diagrams, architecture notes, model cards, benchmark writeups, or onboarding docs. The problem is that none of those are written for patent support. They are written for building.

AI can help bridge that gap.

It can digest internal technical materials and pull out repeated structures, optional modules, alternate logic, system dependencies, processing stages, data transformations, and implementation differences across versions. It can identify what the team treats as modular, configurable, learned, rule-based, policy-controlled, or replaceable.

That is exactly the kind of material that can expand support for broader claims.

For example, a codebase may reveal that there are already adapters for multiple data sources. A design doc may reveal there are two ranking methods. A benchmark note may show an alternate feature pipeline was tested. A system diagram may reveal the processing could run in one service or three.

Those are not just engineering trivia. They are support assets.

Used carefully, AI can pull them together into a clearer invention map. Then attorneys can shape that into a patent application that reflects the real technical breadth of the system.

This is where modern patent workflows can save founders huge amounts of time. Instead of manually trying to translate scattered technical work into legal-ready material, you can use software to organize and expand that information before attorney review. That means less back and forth, fewer missed details, and a much better first draft. You can read more about that kind of workflow here: how PowerPatent helps founders file smarter.

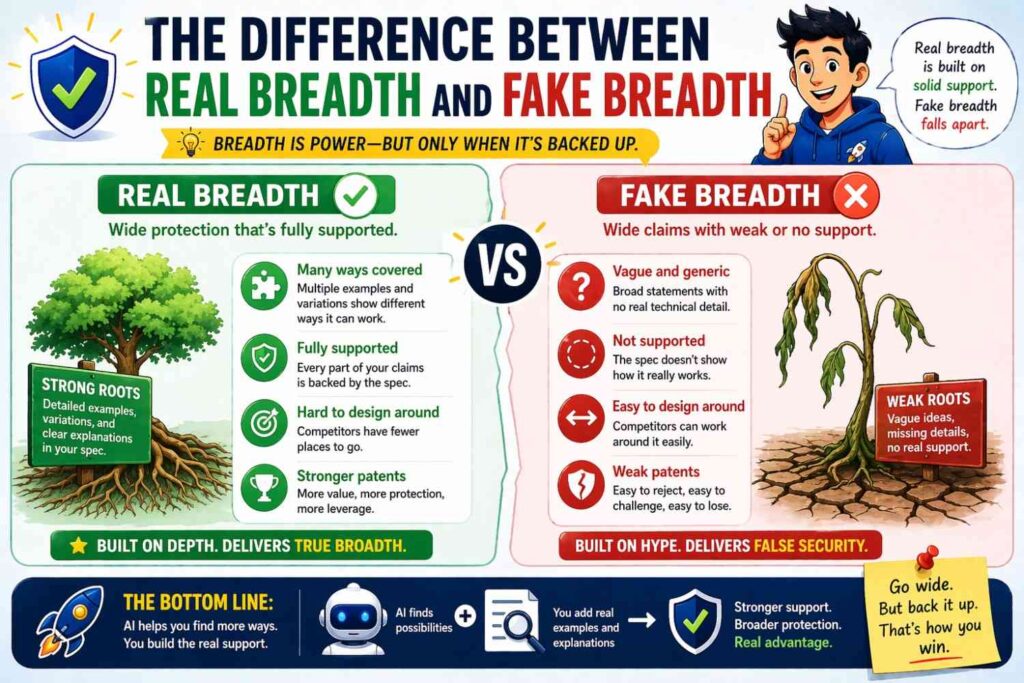

The difference between real breadth and fake breadth

This is important because not all broad language is useful.

Fake breadth is when the draft uses vague general words with no real technical depth behind them. It says things like “any suitable method may be used” or “the system may operate in various environments” without showing enough concrete substance to make those statements feel anchored.

That kind of breadth sounds large, but it is brittle.

Real breadth is different.

Real breadth comes from specific support across a range of connected embodiments. It gives examples. It gives alternatives. It identifies functions. It shows substitutes. It explains optional components. It captures variations in flow, data, environment, and use case.

Real breadth feels earned.

AI can produce both kinds, so you need to guide it well.

If you ask for “broader wording,” you may get fake breadth.

If you ask for “five technically plausible alternatives to this module, with explanation of what stays the same across them,” you are more likely to get real breadth.

The prompt style matters.

The review process matters too.

This is why AI alone is not the final answer. The strongest result comes when AI expands the invention record and experienced patent professionals shape that record into a real application. That mix gives you speed without losing judgment.

A practical AI workflow for expanding support

Let’s walk through a simple way a founder or engineering lead can use AI to improve support before filing.

Start with a plain explanation of the invention in your own words. Do not polish it. Just explain the system, the problem, and the solution.

Then ask AI to extract the core components, major steps, inputs, outputs, and decision points.

Next, ask which elements seem essential to the inventive idea and which seem like implementation choices.

Then ask for alternate implementations of each non-essential element that would still preserve the core.

After that, ask for other environments, industries, data types, and workflow contexts where the same core method could apply.

Then ask for edge cases, fallback paths, and confidence-based branches.

Then ask for alternate execution models such as batch, real-time, local, distributed, event-driven, scheduled, user-triggered, or system-triggered forms.

Then ask for alternate model types or algorithm classes that could perform the same function if the invention allows it.

Then ask AI to restate the invention at three levels: concrete implementation, functional system, and broader technical concept.

Then ask it to generate example embodiments grouped by what changes and what stays the same.

Only after doing that should you ask for support language or draft sections.

This is not complicated. It is just structured. That structure helps you move from “here is what we built” to “here is the wider invention space we can support.”

Done well, this process gives attorneys much better raw material to work with. It also helps founders feel more confident that their application actually reflects the full value of what they created.
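The steps above can be sketched as an ordered prompt sequence. This is a minimal illustration, not a tested prompt set: the `PromptStep` class, the step names, and the exact wording of each template are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptStep:
    """One stage of the support-expansion workflow (hypothetical structure)."""
    name: str
    template: str


# Ordered stages paraphrasing the workflow in the text.
# Template wording is illustrative only.
WORKFLOW = [
    PromptStep("extract", "List the core components, major steps, inputs, "
               "outputs, and decision points in this description:\n{description}"),
    PromptStep("essential_vs_choice", "Which elements seem essential to the "
               "inventive idea, and which seem like implementation choices?"),
    PromptStep("alternates", "For each non-essential element, give alternate "
               "implementations that still preserve the core."),
    PromptStep("contexts", "List other environments, industries, data types, "
               "and workflow contexts where the same core method could apply."),
    PromptStep("edge_cases", "List edge cases, fallback paths, and "
               "confidence-based branches."),
    PromptStep("execution_models", "List alternate execution models: batch, "
               "real-time, local, distributed, event-driven, scheduled, "
               "user-triggered, or system-triggered."),
    PromptStep("model_types", "List alternate model types or algorithm classes "
               "that could perform the same function, if the invention allows it."),
    PromptStep("three_levels", "Restate the invention at three levels: concrete "
               "implementation, functional system, and broader technical concept."),
    PromptStep("grouped_embodiments", "Generate example embodiments grouped by "
               "what changes and what stays the same."),
    PromptStep("draft_support", "Draft support language based on everything above."),
]


def build_prompts(description: str) -> list[str]:
    """Return the prompts in order, filling in the plain-language description."""
    return [step.template.format(description=description) for step in WORKFLOW]
```

Keeping the drafting request as the last step in the list mirrors the point above: support language comes only after the variants, contexts, and edge cases have been collected.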

Why this matters so much for startups

A startup’s first patent filing often carries more weight than people realize.

It may shape later continuations. It may influence investor confidence. It may affect freedom of action as the product evolves. It may become the base you return to for years.

If that first filing is too narrow, the cost is not just one weak document. The cost can ripple forward.

That is why better support matters.

Broader support does not guarantee the broadest possible claim outcome. Nothing does. But it gives you options. It gives your patent team more to work with. It gives future claim strategy a stronger foundation. It reduces the risk that your application gets trapped in the exact version you happened to launch first.

For startups, that flexibility matters because startups pivot, expand, repackage, and redeploy faster than large companies. The invention may outgrow version one very quickly.

AI can help founders file in a way that matches that reality.

Instead of treating patenting as a slow side project, they can treat it as part of technical capture. They can preserve more of the invention when the details are still fresh. They can build a stronger base without spending endless hours translating engineering work by hand.

That is one reason many fast-moving teams are choosing systems built for founders rather than traditional patent workflows. A software-first process with real attorney oversight can help you move quickly while still aiming for serious protection. Here is the place to learn more: PowerPatent’s workflow.

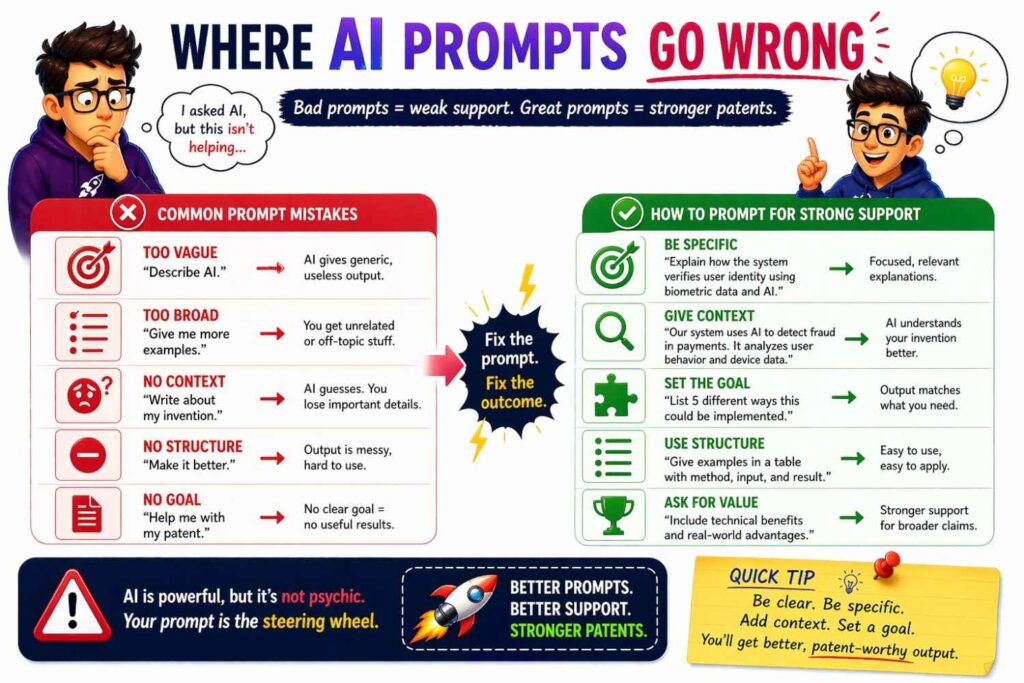

Where AI prompts go wrong

It helps to be honest about the bad patterns too.

One bad pattern is asking AI to “write broad claims” before giving it enough material. That often produces language that sounds impressive but is not grounded.

Another bad pattern is feeding AI a product page and expecting a solid invention disclosure. Product pages are usually too shallow. They focus on benefits, not the real technical structure.

Another bad pattern is letting AI collapse everything into generic phrases. If every component becomes “a module” and every action becomes “processing,” you may lose the specific technical detail that gives the application strength.

Another bad pattern is accepting every variant AI suggests. Some will be nonsense. Some will be too far from what you actually invented. Some may point toward unrelated territory. The goal is not maximum expansion at any cost. The goal is connected, technically sound expansion.

A final bad pattern is skipping human review. AI can accelerate thinking and drafting, but it should not be the final judge of what your patent application needs.

The best approach is disciplined use.

Use AI to ask better questions.

Use AI to expand the technical record.

Use AI to identify weak spots.

Use AI to generate plausible variation sets.

Then use real expert review to turn that into filing-ready work.

How to tell whether your current draft is too narrow

Here is a simple test.

Read the draft and imagine your product changes in a major but realistic way next year. Could the current description still comfortably cover that new version?

If the answer is no, the support may be too narrow.

Ask a few more questions.

If you replaced the current model with another model type, would the draft still fit?

If you moved from cloud to edge, would the draft still fit?

If you added a human review layer, would the draft still fit?

If you changed the input format, would the draft still fit?

If you used the same method in a new industry, would the draft still fit?

If you split one service into several, would the draft still fit?

If you merged several steps into one, would the draft still fit?

If you switched from ranking to threshold-based action, would the draft still fit?

These are not claim questions first. They are support questions.

AI can help run this test quickly. It can simulate product evolution and show where your current description breaks. That kind of gap analysis is very useful before filing. It is much better to fix a thin description early than to realize later that your own future roadmap no longer matches the story you put on file.
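The test above can also be sketched as a simple checklist in code. This is a rough illustration under stated assumptions: the dimensions, the supported values, and the scenarios are all hypothetical, and a real review would rest on reading the draft, not on string matching.

```python
# Hypothetical record of what a draft explicitly supports, per dimension.
draft_support = {
    "model_type": {"ranking model"},
    "deployment": {"cloud"},
    "input_format": {"text", "structured records"},
    "human_review": {"none"},
}

# Realistic changes the product might see next year (illustrative values).
scenarios = [
    ("swap model", "model_type", "classification model"),
    ("move to edge", "deployment", "edge device"),
    ("add reviewer", "human_review", "human-in-the-loop"),
    ("new input", "input_format", "text"),
]


def find_gaps(support, scenarios):
    """Return the scenarios whose new value the draft does not mention."""
    return [
        (label, dimension, value)
        for label, dimension, value in scenarios
        if value not in support.get(dimension, set())
    ]


gaps = find_gaps(draft_support, scenarios)
for label, dimension, value in gaps:
    print(f"Gap: '{label}' needs support for {dimension} = {value}")
```

Each reported gap is a prompt for a support question, such as whether the draft should describe that variant, and not a claim question.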

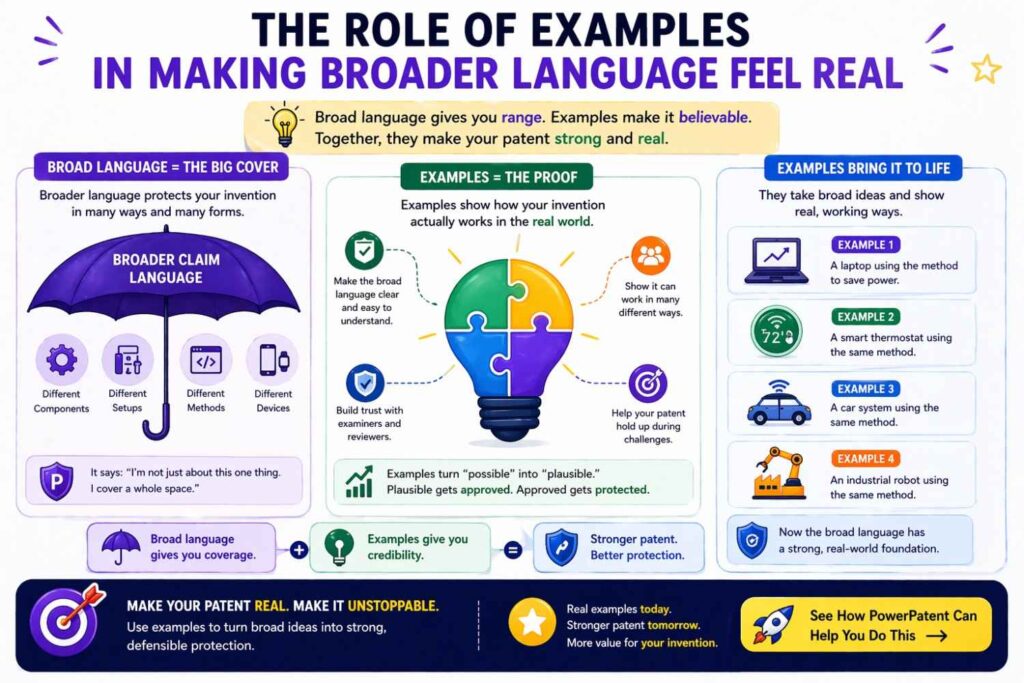

The role of examples in making broader language feel real

When broader language is paired with examples, it becomes easier to trust and easier to use.

For example, saying “the system may use one or more machine learning models” is okay.

But saying that the system may use a classification model, a ranking model, a similarity model, a sequence model, or a hybrid model depending on task structure and available input types is much better.

It still keeps room open, but it feels connected to real technical practice.

The same goes for deployment.

Saying “the system may be implemented in various computing environments” is broad.

Saying the system may execute on a server, a client device, a local gateway, a distributed service set, or a hybrid environment with portions executed across multiple nodes is better.

Examples do not trap you. They often strengthen you.

AI is good at generating example sets when prompted correctly. Ask it for technically credible examples tied to the function being served. Ask it to avoid generic filler. Ask it to explain why each example fits the core invention.

That approach helps create support that is both broad and believable.

Broader claims often begin with better naming

Naming seems small, but it matters.

When founders name invention pieces too narrowly, the whole draft starts to shrink.

If you call something the “email scoring model” throughout the application, you may quietly frame the invention as being about email even if the method could apply to many message types.

If you call something the “video moderation pipeline,” you may narrow the mental frame even if the real method could work for images, audio, or mixed media too.

Better naming creates room.

Maybe that “email scoring model” is really a message-priority determination component.

Maybe that “video moderation pipeline” is really a content analysis workflow.

You can still mention email and video as examples. You can still provide those detailed embodiments. But the naming at the core should reflect the actual inventive center.

AI can help identify narrow naming in a draft and suggest broader but still accurate replacements. This is one of those low-effort, high-return improvements that many teams overlook.

The right names make it easier to draft support at the right level from the start.
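A naming check like this can be approximated with a small script before any AI review. The sketch below assumes a hand-maintained mapping built from the two examples above; the function name and the mapping itself are hypothetical, and the suggested replacements still need human judgment.

```python
import re

# Hypothetical mapping from narrow product names to broader role names,
# using the two examples from the text.
BROADER_NAMES = {
    "email scoring model": "message-priority determination component",
    "video moderation pipeline": "content analysis workflow",
}


def flag_narrow_names(draft: str) -> list[tuple[str, str, int]]:
    """Return (narrow name, suggested name, occurrence count) for each hit."""
    findings = []
    for narrow, broader in BROADER_NAMES.items():
        # Case-insensitive match so headings and body text are both counted.
        count = len(re.findall(re.escape(narrow), draft, flags=re.IGNORECASE))
        if count:
            findings.append((narrow, broader, count))
    return findings
```

For a draft that mentions the "email scoring model" twice, the function reports that name, its suggested replacement, and a count of 2, which gives a quick sense of how deeply the narrow framing runs through the text.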

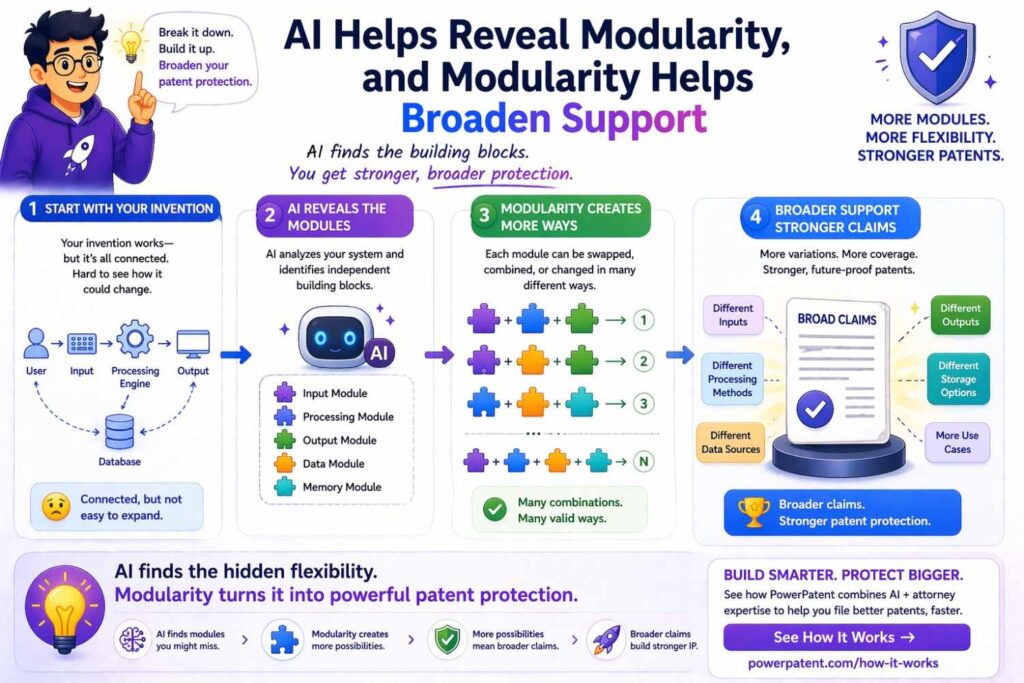

AI helps reveal modularity, and modularity helps broaden support

A system becomes easier to support broadly when its pieces are understood as modules with roles, not just as one fixed pipeline.

This does not mean your actual code must be perfectly modular. It means the invention can often be described in modular terms if the functions are separable.

For example, you may have an input processing stage, a context gathering stage, a relevance stage, a risk stage, a decision stage, and an action stage. In the product, these may blur together. But for support, it can be useful to describe them as modules or logical portions.

Why?

Because modularity makes alternatives easier to express. If the context gathering stage can use different sources, say so. If the decision stage can combine rules and model output in different ways, say so. If the action stage can take different downstream actions, say so.

AI is especially good at spotting modular structure in technical explanations. It can take a messy product walkthrough and divide it into logical blocks. Then you can ask how each block could vary while preserving the core method.

That is a powerful support-building exercise.
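To make the exercise concrete, here is a minimal sketch that treats each stage as a slot with interchangeable variants and lists the resulting combinations. The stage names come from the example above; the variants listed for each stage are hypothetical placeholders, and not every generated combination will be technically sensible.

```python
from itertools import product

# Hypothetical variants per stage; only the stage names come from the text.
stage_variants = {
    "context gathering": ["user history", "account metadata"],
    "decision": ["rules only", "model output only",
                 "rules combined with model output"],
    "action": ["auto-apply", "route to review"],
}


def enumerate_embodiments(variants):
    """Yield one dict per combination of stage variants."""
    stages = list(variants)
    for combo in product(*(variants[stage] for stage in stages)):
        yield dict(zip(stages, combo))


embodiments = list(enumerate_embodiments(stage_variants))
print(len(embodiments), "candidate embodiments")
```

The list is a starting point for review. The team and counsel still decide which combinations are real, connected variants of the same core method.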

Support for broad claims is often built by asking “why” three times

This is a simple technique and it works.

Start with one implementation detail and ask why it exists.

Suppose your system uses a confidence threshold of 0.82 before auto-action.

Why?

Because you only want automation when the output is reliable.

Why?

Because the real invention balances system efficiency with action quality.

Why?

Because the system adapts action behavior based on estimated output trustworthiness.

Now you are closer to the broader concept.

The exact threshold is not the invention.

Even the exact reliability calculation may not be the invention.

The deeper idea may be confidence-conditioned action control.

That broader idea can then be supported in many forms: different thresholds, learned thresholds, per-account thresholds, confidence bands, review routing, holdback actions, and different classes of downstream response.

AI can help run this “why” process across many parts of the system. It helps founders move past surface implementation and find the deeper structure worth supporting.

This technique is especially useful when the draft feels too tied to tuning values, architecture labels, or narrow product names.
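The confidence example can be sketched in a few lines to show how the broader concept covers several of those forms at once. This is an illustration only: the 0.82 value comes from the example above, while the review band, the per-account override, and the action names are assumptions.

```python
from typing import Optional

DEFAULT_THRESHOLD = 0.82  # the example value from the text
REVIEW_BAND = 0.60        # hypothetical lower bound for routing to review


def choose_action(confidence: float,
                  account_threshold: Optional[float] = None) -> str:
    """Map a confidence score to an action class.

    A per-account threshold, if given, overrides the default. Scores below
    the threshold but inside the review band go to a reviewer; anything
    lower is held back.
    """
    threshold = DEFAULT_THRESHOLD if account_threshold is None else account_threshold
    if confidence >= threshold:
        return "auto_action"
    if confidence >= REVIEW_BAND:
        return "route_to_review"
    return "hold_back"
```

Nothing in the function depends on 0.82 specifically. The stable part is that the action taken is conditioned on estimated trustworthiness, which is the idea the "why" chain surfaced.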

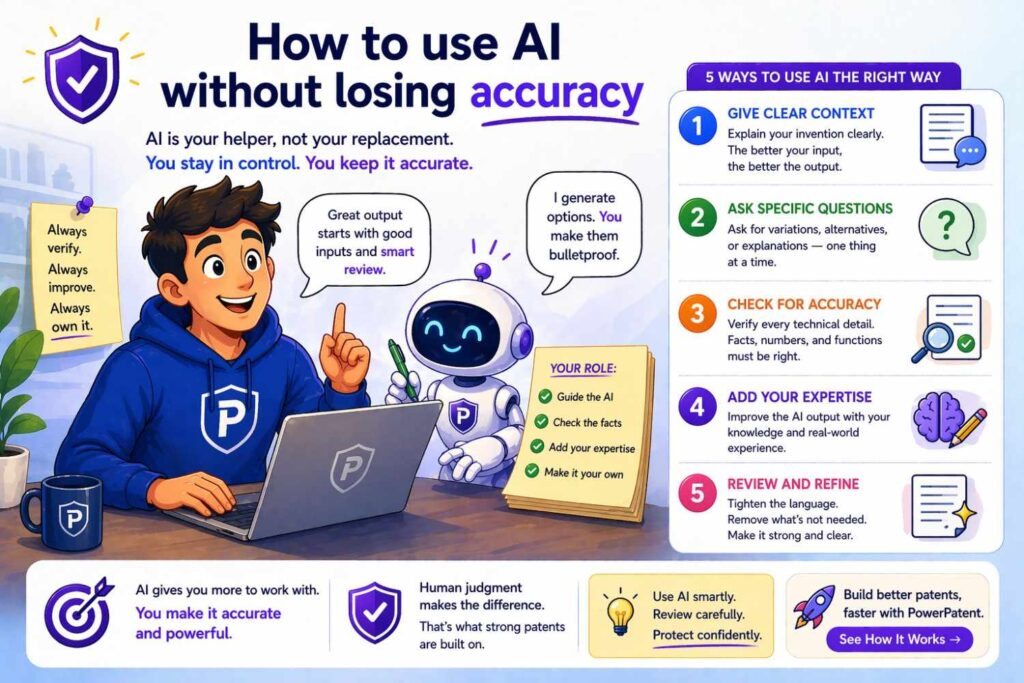

How to use AI without losing accuracy

The answer is simple: keep grounding everything in your real system.

Do not ask AI to invent a bigger invention than you have.

Ask it to expose the width already latent in what you built.

That is a very different mindset.

Grounding can come from code, diagrams, experiments, architecture notes, design tradeoffs, roadmap paths your team already considered, and engineering reasoning about what could be swapped without changing the core function.

The more grounded your inputs, the better the output.

It also helps to keep a rule like this: every broadening move should meet one of two conditions.

Either it is something the team already built, tested, or seriously considered.

Or it is a technically plausible variant that a skilled engineer would recognize as serving the same role in the same invention framework.

That filter keeps the expansion honest.

Then, before anything becomes part of the filing package, review it with the people who know the system best and with experienced patent counsel.

That combination is what turns AI from a text toy into a real drafting advantage.

Case pattern one: the founder who drafted too close to the UI

This is common in software startups.

The founder explains the invention through the user interface because that is what they demo every day. The draft ends up focused on screens, buttons, user flows, and visible outputs.

But the real invention is often deeper in the engine.

Maybe the novel part is how context is assembled. Maybe it is how candidate actions are scored. Maybe it is how a model output is constrained before release. Maybe it is how multiple data layers are fused.

AI can help shift focus from surface UI to technical mechanism.

It can ask what happens behind the screen. It can ask how the result is formed. It can separate presentation from core processing.

This matters because UI-heavy drafting often leads to narrow support. The product may later ship through an API, embedded interface, automation tool, or background service, but the original description stays trapped in visible workflow language.

A better support strategy describes the UI embodiment in detail while also describing the underlying technical method independent of the screen.

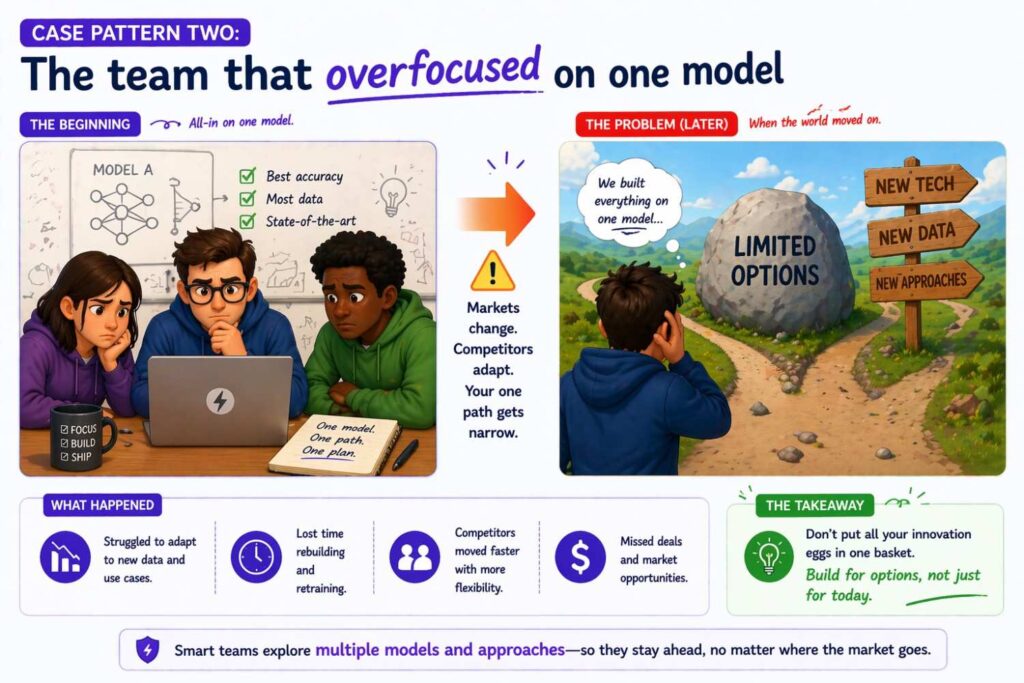

Case pattern two: the team that overfocused on one model

Another common pattern appears in AI companies.

The team is proud of a specific model setup, and rightly so. They write the whole disclosure around it. The prompt chain, fine-tuning path, retrieval method, post-processing logic, and orchestration stack all get described with exact labels.

That can be useful, but it can also become too narrow.

The real invention may lie in how the system controls output quality, merges context layers, routes work based on confidence, adapts to user state, or constrains generation using internal policy logic. Those ideas can outlive the current model choice.

AI can help separate the stable invention from the current model stack. It can identify what truly requires the present setup and what could survive a model swap. That distinction is critical for support that ages well.

Case pattern three: the hardware team that forgot software alternatives

In hardware and robotics, teams often draft from the physical build. They describe the exact sensor layout, circuit path, actuator type, or device body.

That is important. But support may be stronger if the draft also captures software control alternatives, signal processing alternatives, calibration alternatives, and data interpretation paths.

Maybe a sensor reading could be handled by local logic or remote logic.

Maybe actuation could be automatic or operator-assisted.

Maybe the same physical event could be detected through thresholding, pattern matching, learned models, or sensor fusion.

AI can help uncover these alternative technical paths around the physical design. That creates broader support while staying faithful to the actual invention.

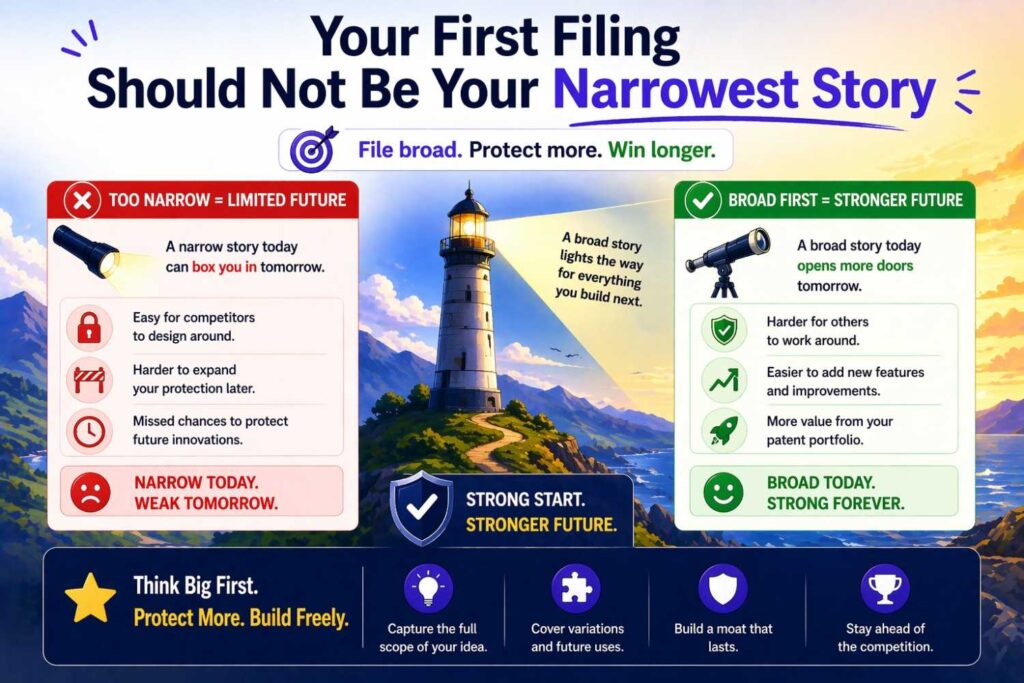

Your first filing should not be your narrowest story

Many founders assume the first patent filing should be the most conservative version. They think they should only say the bare minimum they can “prove” right now.

That instinct is understandable, but it often leads to weak support.

Your first filing should be grounded, yes. It should be accurate, yes. But it should also capture the wider technical family around the invention where that family is real and supportable.

Why?

Because later filings often depend on the foundation you already laid. If the first application is too skinny, you may have fewer options later than you hoped.

AI helps here because it can expand the first record without making the process unbearably slow. It helps you gather more while the technical memory is fresh. That is a major advantage for startups that cannot afford to revisit old work from scratch every few months.

Why attorney oversight still matters

It matters because support and claim strategy are not the same thing.

AI can help you build the support base. It can help uncover breadth. It can help organize embodiments. It can help translate technical work into structured material.

But deciding how to frame claims, how to balance breadth and defensibility, how to avoid unnecessary traps, and how to shape the filing in view of patent practice still requires judgment.

That is why the best setup is not AI alone. It is AI plus real attorney review.

That combination gives founders the best of both worlds: speed and depth from software, and experienced legal strategy from humans who know what strong patent protection requires.

That is also why platforms designed around that model can be so useful. You do not have to choose between a clunky traditional process and a fully DIY process. You can use software to pull out the invention and still get real professional oversight before filing. This is exactly the kind of modern workflow founders should understand: see how PowerPatent works.

A founder-friendly way to think about claim breadth

Do not think of broad claims as magic language.

Think of them as a business lever that depends on technical preparation.

If the support underneath is rich, you create room for stronger protection.

If the support underneath is thin, then broad wording becomes risky, fragile, or simply unavailable.

That means the work happens earlier than most people think.

It happens when you document the variants.

It happens when you capture alternate flows.

It happens when you explain functions, not just labels.

It happens when you include multiple embodiments.

It happens when you separate the core invention from version-one implementation details.

It happens when you use AI as a multiplier for invention capture, not just a text generator.

That is the big idea.

A good internal exercise before you file

Before the draft is finalized, gather the founder, one engineer who knows the system deeply, and whoever is helping prepare the patent. Then walk through these questions in normal language.

What part of the system do we believe is truly new?

What parts could change next year without changing that new idea?

What alternate methods did we test or discuss?

What assumptions are we making in the current draft that may not need to be fixed?

What other kinds of data, outputs, or deployment paths could fit the same invention?

What happens when the system is uncertain, partial, or under stress?

What would a competitor change first if they wanted to copy the idea without matching our exact implementation?

That last question is especially good.

Competitors rarely copy you line for line. They change the obvious surface details and keep the value. A strong support strategy anticipates that by documenting more than the exact current build.

AI can help prepare for this session by generating candidate answers, surfacing missing areas, and showing where the current draft is still tied too closely to the present product.

What founders should stop doing right now

They should stop assuming a patent draft is broad just because it uses broad words.

They should stop describing only the polished product story.

They should stop thinking the current code path is the only path worth documenting.

They should stop waiting until every product detail is final before starting invention capture.

And they should stop using AI only to polish prose.

The real advantage of AI is upstream. It helps you discover, widen, structure, and pressure test the invention record before the filing is locked.

That is where the real value sits.

What founders should start doing instead

They should start capturing invention depth while building.

They should start treating technical alternatives as valuable support material.

They should start asking AI for variants, functions, edge cases, and alternate embodiments.

They should start reviewing drafts with the future product in mind, not just the current release.

They should start using workflows that combine software speed with attorney review so they do not have to choose between moving fast and doing it right.

That kind of process is exactly what strong startups need when IP matters but time also matters. If that sounds like your situation, this is the best next step: learn how PowerPatent works.

One of the smartest things founders can start doing is treating patent prep like an ongoing business habit instead of a last-minute writing project.

Most teams wait until they are almost ready to file. By then, key details are already gone. Engineers forget why certain choices were made. Old experiments are hard to find. Early versions that reveal the real inventive leap are buried in Slack, tickets, or old branches. That creates a weaker record than the business actually deserves.

A better move is to build a simple invention capture habit into the product cycle.

At the end of each major sprint, release, or model update, someone on the team should answer a few business-focused questions in plain English.

What changed technically? What problem did that change solve? What options were considered and rejected? What part of the system now gives the company a stronger edge? What workarounds would a competitor likely try if they wanted similar results without copying the exact product?

This kind of internal record becomes very useful later. It helps the business spot patent-worthy work earlier. It also makes broader support easier because the team is not forced to recreate months of technical thinking from memory.

AI can be used here in a very practical way. It can take product notes, architecture updates, and engineering summaries and turn them into a clean internal invention log. That gives leadership a much clearer view of which technical advances are worth protecting before they become old news.
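One way to keep such a log consistent is to give every entry the same fields as the questions above. The sketch below is a hypothetical structure, not a prescribed format; the class name, field names, and sample values are assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class InventionLogEntry:
    """One end-of-sprint record; fields mirror the questions in the text."""
    milestone: str
    what_changed: str
    problem_solved: str
    options_rejected: list[str] = field(default_factory=list)
    competitive_edge: str = ""
    likely_workarounds: list[str] = field(default_factory=list)

    def missing_fields(self) -> list[str]:
        """Names of questions left unanswered, to prompt a follow-up."""
        return [name for name, value in vars(self).items() if not value]


entry = InventionLogEntry(
    milestone="v2 release",
    what_changed="Added per-account confidence thresholds",
    problem_solved="Too many incorrect automatic actions",
)
print(entry.missing_fields())
```

Empty answers are often the most useful signal. A missing list of rejected options or likely workarounds usually means the team has not yet written down the alternatives that broaden support.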

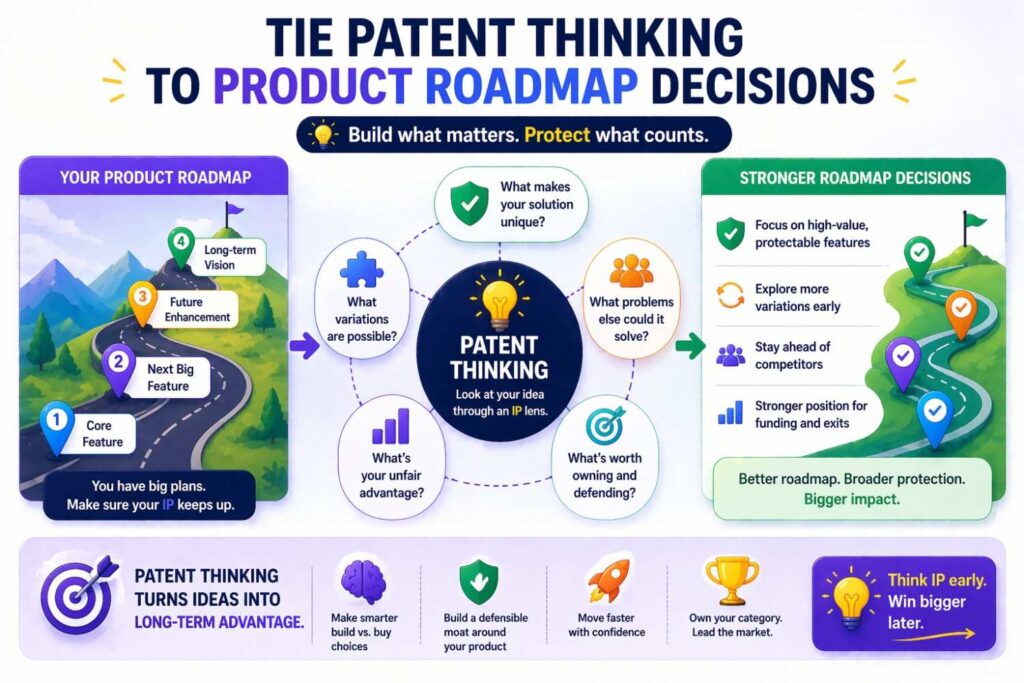

Tie patent thinking to product roadmap decisions

Founders should also start linking patent strategy to roadmap strategy.

This is where many businesses miss a big opportunity.

A roadmap is not just a build plan. It is also a clue about where future value will sit. If the company already knows it may expand into new workflows, new customer types, new deployment models, or new data sources, that knowledge should shape how support is built now.

This does not mean filing on random future dreams. It means looking at the roadmap and asking a sharper question: which upcoming changes are natural extensions of the invention we already have?

That is where broader support becomes a business asset.

For example, if a company knows it will move from one vertical into three adjacent ones, the support should not stay locked to the first market alone. If the team knows a cloud workflow will later be embedded into enterprise systems, the filing should not read as if the invention only lives inside a stand-alone web app. If the company expects to move from human-in-the-loop review toward more automation, that progression should be reflected in the support where it truly fits.

Before each filing, leadership should review the next twelve to eighteen months of likely product evolution and mark which changes are true growth paths of the same invention. Those paths should then be discussed during patent prep. That helps the business protect not just current revenue, but future expansion room.

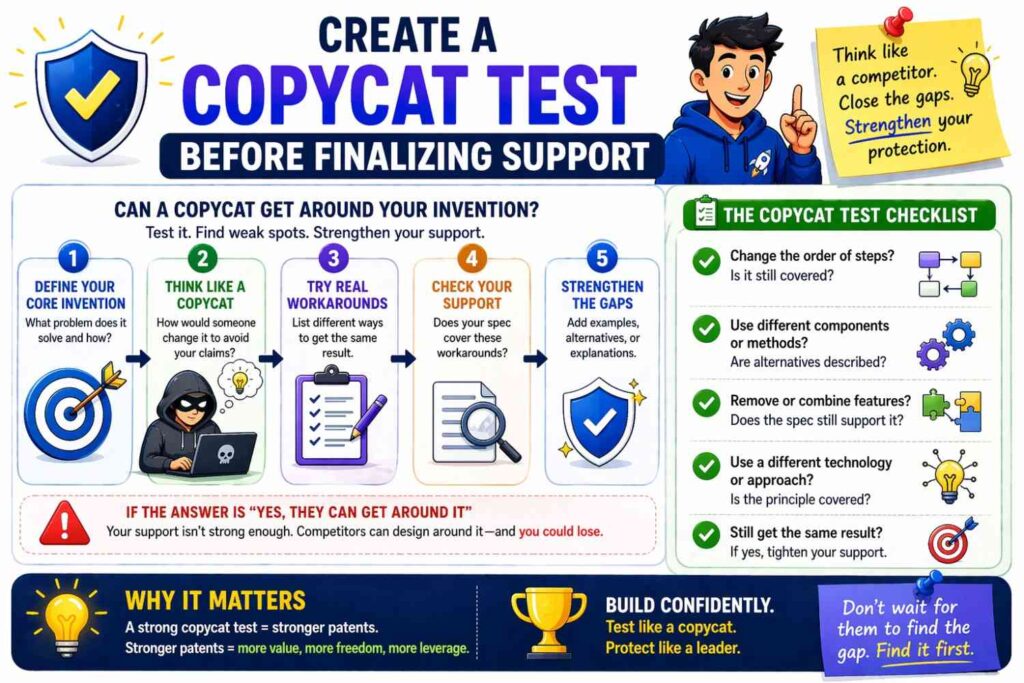

Create a “copycat test” before finalizing support

Founders should also start running what can be called a copycat test.

It is simple, but strategic.

Ask the team to imagine a well-funded competitor sees your product, understands why customers like it, and wants to copy the value while changing surface details. What would they swap first?

They may change the model type. They may change the interface. They may move processing to a different layer. They may swap a rule engine for a learned system. They may use a different source of input data. They may turn a visible feature into a background workflow.

That exercise reveals where your current support may be too tied to form instead of function.

This is one of the best internal business exercises a startup can run because it aligns patent work with real market behavior. Competitors rarely clone the whole product exactly. They usually copy the core business value while changing the technical wrapper enough to create distance.

AI can help the team run this test with more depth. It can generate likely workaround paths based on the invention summary. It can suggest what parts of the current draft a competitor could sidestep. That helps the company tighten weak spots before filing instead of discovering them after a rival appears.

Assign one person to own invention quality across teams

Another smart move is to give invention capture a real owner inside the business.

This does not mean creating a heavy legal process. It means having one trusted person responsible for making sure technical value does not get lost between engineering, product, and leadership.

In many startups, nobody owns this. Engineers are busy building. Product leads are focused on shipping. Founders are handling growth and fundraising. Patent work becomes fragmented. Important details never get pulled together.

A strong business practice is to assign one person to collect invention signals across the company. This person does not need to write the patent alone. But they should know how to spot real technical differentiation, gather relevant material, and make sure the right conversations happen early.

That person can maintain a simple system. They can flag promising launches. They can collect design decisions. They can ask what alternatives were tested. They can make sure patent counsel gets richer inputs. They can use AI tools to turn scattered internal material into organized invention summaries.

For a startup, this is a very high-leverage role. It reduces missed opportunities, improves consistency, and creates a better bridge between product strategy and IP strategy.

Turn engineering decisions into business protection

Founders should start treating engineering tradeoffs as possible protection assets.

A lot of valuable support hides in decisions the team already made.

Maybe the company chose a certain control flow because it reduced latency without hurting output quality. Maybe it blended rule logic with model output because that gave better trust in real-world use. Maybe it changed how data is grouped, filtered, or stored because that improved action speed, reliability, or cost.

Those decisions matter. They are not just internal implementation notes. They may be the reason the system works better in the market.

Businesses should start documenting not only what the system does, but why certain technical choices created a real advantage. That makes patent support more strategic because it ties the invention to business impact.

A helpful internal practice is this: when a technical change improves speed, cost, quality, reliability, safety, or scale, ask whether the mechanism behind that improvement has patent value. Then use AI to help map alternate versions of that mechanism so the support is not limited to only one exact implementation.

This helps the company protect the real engine of advantage, not just the visible feature customers see on the screen.

Build filing prep around commercial risk, not just technical pride

Founders should also start deciding what to emphasize based on business risk.

Teams often overfocus on the part of the technology they think is smartest. But the most brilliant technical component is not always the part that matters most for business protection.

Sometimes the highest-risk area is the part that competitors can most easily copy once the market proves demand. Sometimes it is the workflow that creates customer lock-in. Sometimes it is the orchestration layer that makes a model usable in a real business setting. Sometimes it is the system that reduces cost enough to change the economics of the category.

That is why founders should ask: if a competitor copied one thing from us in the next year, which technical area would hurt the most?

That question helps the business focus its support-building efforts where they matter most.

This is a powerful way to prioritize. It prevents patent work from drifting toward technical vanity. It pushes the team to protect what carries real commercial weight.

AI can support this process by helping categorize which parts of the system are easiest to imitate, hardest to detect, easiest to redesign, or most central to customer value. That makes the patent conversation far more strategic.

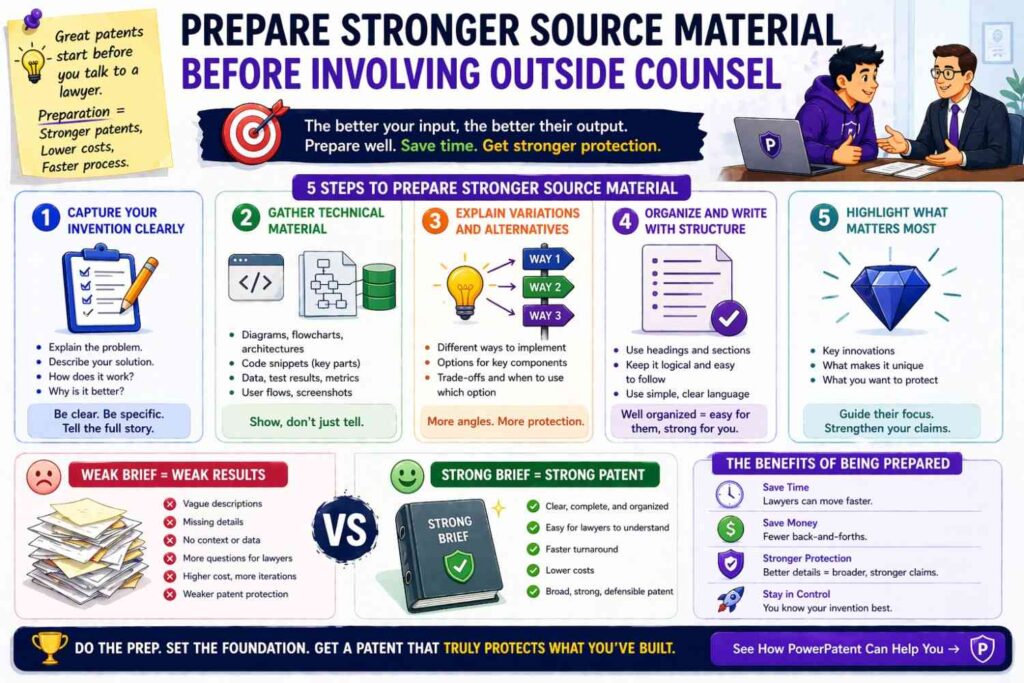

Prepare stronger source material before involving outside counsel

One concrete step is to improve the quality of what the team hands to patent counsel.

Many teams send scattered notes, a demo deck, and a rough explanation. Then they hope the drafting process will somehow discover all the hidden value in the invention. That is asking too much from a thin starting point.

A better approach is to prepare a compact but rich invention packet before the drafting process begins.

That packet should explain the problem, the technical solution, the current implementation, important alternatives, known future extensions, edge handling, and why the system creates a business advantage. It should also include diagrams, sample workflows, and any internal notes that reveal modularity or alternate paths.

AI can make this much easier. It can take raw internal materials and help turn them into a structured invention summary that is more complete, more useful, and much easier for counsel to work from.

This creates a real business advantage. Better inputs usually lead to better drafts, less rework, fewer missed details, and a faster path to a filing the company can feel good about.

Treat each patent filing as a platform, not a single document

Businesses should start thinking of each filing as a platform for future protection.

This mindset changes how teams prepare support.

If a filing is treated as a one-off document, teams often aim for basic coverage and move on. But if a filing is treated as a platform, the goal becomes building a strong base that can support later claim paths as the company grows.

That means asking early which parts of the invention may branch into later versions. It means capturing enough depth that future claim strategy has room to move. It means making sure the written support is not so narrow that the company has to start over every time the product evolves.

This is a very strategic business move because startups rarely stay still. A good patent foundation should make later expansion easier, not harder.

AI can help by mapping the invention into clusters of related embodiments and showing where the current support is rich enough for future branching and where it is still too thin. That kind of visibility is useful for leadership because it turns patent prep into a longer-term asset-building exercise.

Make invention reviews part of leadership rhythm

Founders should also start discussing invention protection during normal leadership reviews.

Not in a vague way. In a business way.

When product leaders review roadmap progress and technical wins, there should be a regular moment to ask whether any recent work created defensible technical value worth capturing. This should not feel like a legal interruption. It should feel like protecting business leverage.

That simple habit helps companies catch strong filing opportunities earlier. It also helps leadership see IP as part of company building rather than as an outside process that appears once or twice a year.

A smart rhythm is to review major technical changes once each quarter and ask which ones changed the company’s strategic position. AI can help prepare this review by scanning internal materials and surfacing candidate inventions, key technical differences, and possible support-expansion areas.

That makes the discussion faster and more grounded.

Move from reactive patenting to proactive protection

The deeper shift is this: businesses should stop patenting only when someone remembers to do it.

They should build a proactive system.

That system should capture invention value early, connect it to roadmap direction, test it against likely competitor workarounds, and turn technical insight into stronger support before the filing window closes.

That is how smart companies protect growth.

It is also how founders avoid the common mistake of filing something that describes the product they had, rather than the business they are becoming.

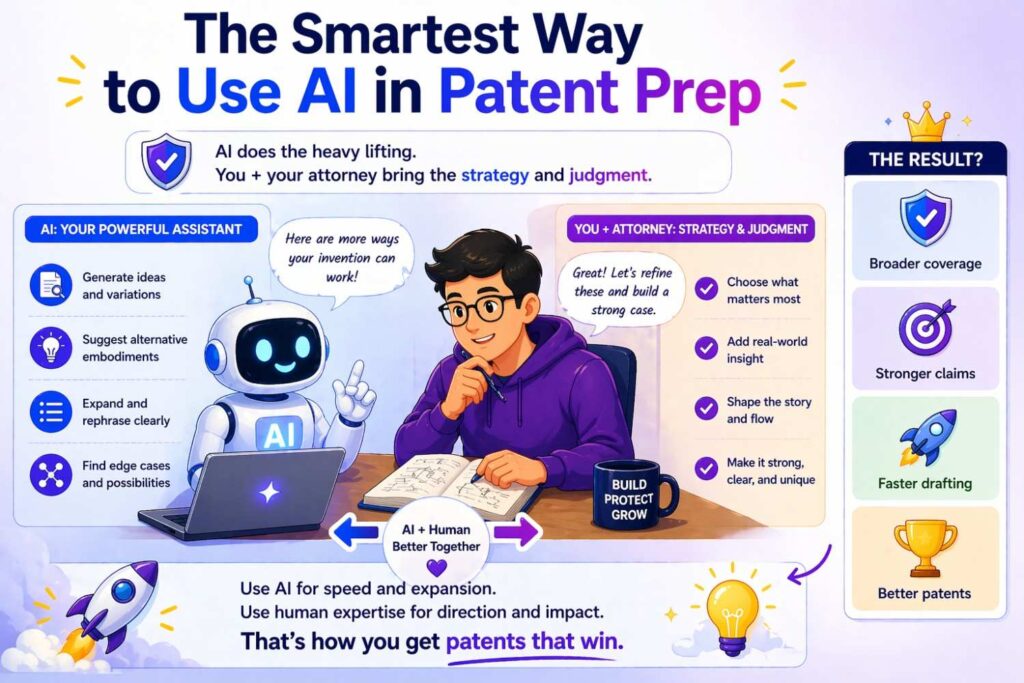

The smartest way to use AI in patent prep

Use it as a mirror, a mapmaker, and a pressure tester.

As a mirror, it reflects your invention back to you more clearly than a rushed internal note ever could.

As a mapmaker, it shows the broader territory around the exact build you have today.

As a pressure tester, it reveals where your draft is too narrow, too specific, too UI-bound, too model-bound, or too tied to one environment.

That is the role where AI is most powerful.

Not replacing thought.

Not replacing judgment.

Multiplying both.

Final thought

If you want broader claims, do not start by reaching farther with the claims.

Start by giving those claims more ground to stand on.

That means capturing more of the invention family. More embodiments. More alternate paths. More functional framing. More examples. More edge cases. More deployment forms. More substitutes. More technical depth.

AI can help you do that faster and more completely than older patent workflows usually allow.

But the best results come when that AI-driven expansion is paired with real human expertise. That is how founders protect not just what they launched, but what they are truly building toward.

And that is the whole point.

If you are building something valuable and want a patent process that helps you capture the full technical story without slowing the company down, take a close look at PowerPatent. It is built for founders who want stronger patents, less friction, and real attorney-backed support.