Recently, there have been many articles and posts discussing ChatGPT and its potential to draft patent claims. But can such a model really deliver on its promises?

ChatGPT is a language-model AI system trained on large amounts of text. It uses neural nets to generate plausible sequences based on what it’s been shown but lacks the capacity to differentiate between fact and fiction.

With the recent spike of interest in ChatGPT, a natural question arises: can ChatGPT draft good patent claims?

To put this to the test, we ask ChatGPT directly and the answer is as follows:

Next, we ask ChatGPT to write a sample claim on flying cars. Here is what it generates:

Next we ask ChatGPT what it thinks about its claims:

ChatGPT notes that: “ChatGPT-generated claims may not always meet these requirements. For example, ChatGPT may produce claims that are ambiguous, imprecise, or overly broad, making it difficult to determine the scope of the claimed invention. Additionally, ChatGPT may not fully capture the technical nuances of the invention, leading to claims that are incomplete or inaccurate.”

We then ask if ChatGPT can write 101-compliant claims. This is where ChatGPT starts hallucinating a bit:

Next we discuss a few issues to consider when using ChatGPT to draft your claims.

Accuracy of Output

ChatGPT is a machine learning system designed to generate text from an extensive language model trained through both supervised and reinforcement learning techniques. Experts say the platform could be beneficial for many tasks, such as product descriptions, personalized marketing campaigns, and predictive maintenance.

It also has some notable shortcomings. One is its tendency to provide plausible but inaccurate answers. For instance, if asked to write a biography of a public figure, it may insert inaccurate biographical data.

Another issue with ChatGPT is its tendency to get facts and calculations wrong. For instance, if you ask it a math question, it may calculate the answer incorrectly.

This isn’t just an anomaly: Generative AI systems such as ChatGPT have long been known for their tendency to produce text that “fits the pattern” of what’s available in the real world. Part of what makes them impressive is their capacity for taking concepts and ideas in unexpected directions.

But that doesn’t guarantee all the facts and calculations they present are correct; in fact, it could very well be that they are incorrect.

Due to its human-like qualities, it can be challenging to draw a clear line between what is and isn’t “convincing” in certain scenarios. Unfortunately, as technology continues to advance, this difficulty will likely persist.

Despite these limitations, ChatGPT has already produced some remarkable results. It represents a major advancement in machine learning technology and could have far-reaching effects on how we live our lives.

Indefiniteness

ChatGPT’s impressive text generation performance is not without its limitations. Its answers can change depending on how a question is phrased, and it may produce answers that are ambiguous, nonsensical, or overly verbose. These limitations must be taken into consideration before using this program for text generation.

This is due to a fundamental distinction between large language models (LLMs) and traditional Turing machines. In a traditional Turing machine, results are repeatedly reprocessed by the same computational elements; in a GPT model, on the other hand, the results of an original input are “rippled” once through multiple layers of the network, with each neuron doing its computation and then passing its output along to neurons on subsequent layers.
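As a rough illustration of what a single pass through layers of neurons looks like, here is a minimal sketch in Python. It is a plain feed-forward toy network, not the actual GPT architecture (which also uses attention and far larger layers), and the function names `dense_layer` and `forward` and all weights are made up for the example.

```python
import math

def dense_layer(inputs, weights, biases):
    """Apply one fully connected layer followed by a tanh nonlinearity."""
    outputs = []
    for neuron_weights, bias in zip(weights, biases):
        # Each neuron computes a weighted sum of its inputs plus a bias.
        total = sum(w * x for w, x in zip(neuron_weights, inputs)) + bias
        outputs.append(math.tanh(total))
    return outputs

def forward(inputs, layers):
    """Pass the input through each layer exactly once, in order."""
    activations = inputs
    for weights, biases in layers:
        activations = dense_layer(activations, weights, biases)
    return activations

# Toy network: 2 inputs -> 3 hidden neurons -> 1 output (illustrative weights).
toy_layers = [
    ([[0.5, -0.2], [0.1, 0.4], [-0.3, 0.8]], [0.0, 0.1, -0.1]),
    ([[0.7, -0.5, 0.2]], [0.05]),
]
result = forward([1.0, 2.0], toy_layers)
print(result)
```

Note that `forward` has no loop that feeds a layer's output back into an earlier layer: each layer is visited once per pass, which is the property the paragraph above describes.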

Though a large language model can generate detailed and comprehensive claims, it may still lack enough understanding of an invention to draft a patent application. Therefore, patent attorneys must be cautious to not disclose confidential information to publicly-accessible large language models. Any inadvertent disclosures could result in lost patent rights or time bars if utility applications aren’t filed within one year of disclosure.

ChatGPT is still in its early stages and has yet to reach artificial general intelligence (AGI), the capacity for learning any intellectual task a human can undertake. To reach AGI, a hybrid model must be created that incorporates symbolic AI with machine learning techniques.

This hybrid approach, which is being explored by several companies, has the potential to bridge low-level data-intensive perception and high-level logical reasoning. Studies have also shown it to increase the reliability of generative AI systems. In the future, experts hope such a model will serve as the precursor to much more advanced AI systems capable of understanding and learning anything a human being can.

Section 101 Issues

When crafting patent claims, it can be tempting for inventors to attempt to maximize the scope of their claims. However, this could actually work against the objectives of the patent system; its purpose is to safeguard only patentable inventions so inventors should only claim what they have actually created.

One way to prevent this is to employ a more stringent test for defining patentable subject matter boundaries – known as the threshold test. Under this standard, claims cannot be too abstract or vague to qualify as patentable.

Analysis of patentability is typically based on a specific statutory category of invention. For instance, a claim may not qualify as patentable if it does not describe a new and useful process, machine, manufacture, or composition of matter.

However, the statutory categories of patentable subject matter do not include “abstract ideas” and “natural laws/phenomena.” These concepts have been declared patent ineligible under the Supreme Court’s Alice and Mayo decisions.

Courts have often upheld claims that involve otherwise ineligible concepts as long as the claims are limited to a practical application. For instance, an invention such as creating a wireless media stream to a handheld device would not be considered abstract since such technology wasn’t widely available at the time of its invention.

It is also essential to recognize that some patent-ineligible concepts can actually be quite inventive. For instance, in Prometheus, the inventors utilized a correlation between metabolite levels and effective treatment as the foundation of their invention.

Confidentiality of ChatGPT Input

As an AI language model, ChatGPT processes input data in a similar way to a search engine or web browser. This means that it is possible for ChatGPT to retain some information about the input it receives, such as the text of the messages that are entered.

The confidentiality of the input provided to ChatGPT will depend on how the system is used. If you are using a third-party application or service that integrates ChatGPT, it is important to review the terms and conditions and privacy policy of that service to understand how your data is handled and protected. Here, OpenAI’s FAQ states the following:

Can you delete specific prompts?

No, we are not able to delete specific prompts from your history. Please don’t share any sensitive information in your conversations.

OpenAI’s Privacy Policy further states:

Communication Information: If you communicate with us, we may collect your name, contact information, and the contents of any messages you send (“Communication Information”).

This problem with ChatGPT was raised by Aaron Gin and Yuri Levin Schwartz, who stated: “patent attorneys must take care not to disclose confidential information to publicly-accessible large language models like ChatGPT. A court could consider the content of the messages as public disclosure of the invention because OpenAI has no obligation to secrecy. Inadvertent disclosures could result in a loss of patent rights and/or a time bar if a utility application is not filed within one year of the disclosure.”
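To make the one-year window concrete, the sketch below computes the date one year after a disclosure and the days left before it. This is only a calendar calculation under simple assumptions: the function names are hypothetical, mapping February 29 to February 28 is our own choice, and the real deadline depends on the jurisdiction, on what counts as a disclosure, and on rules for weekends and holidays.

```python
from datetime import date

def one_year_deadline(disclosure: date) -> date:
    """Return the date one year after a disclosure."""
    try:
        return disclosure.replace(year=disclosure.year + 1)
    except ValueError:
        # Feb 29 has no counterpart in the following year; we assume Feb 28.
        return disclosure.replace(year=disclosure.year + 1, day=28)

def days_remaining(disclosure: date, today: date) -> int:
    """Days left before the one-year mark (negative if it has passed)."""
    return (one_year_deadline(disclosure) - today).days

# Hypothetical example: a prompt containing invention details sent on 2023-03-15.
disclosure_date = date(2023, 3, 15)
print(one_year_deadline(disclosure_date))                 # 2024-03-15
print(days_remaining(disclosure_date, date(2024, 3, 14)))  # 1
```

A tracker like this is no substitute for docketing by a patent professional, but it shows how quickly an inadvertent disclosure starts a clock.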

Potential Risks of Using ChatGPT for Patent Drafting

ChatGPT is an AI text-generation tool that produces remarkably well-written answers to short prompts. What used to take days of effort can now be completed within minutes. It is a promising technology that could simplify patent drafting for attorneys. However, five potential risks should be taken into account before using it for creating patent applications.

1. Section 112 Issues

When drafting claims, providing an express antecedent basis for all claim terms is essential. This ensures that words in the claim are defined precisely so anyone reading it can comprehend its meaning. A claim must also be precise as to which part of the invention it refers to, which may necessitate explanations in the detailed description. For instance, if you use “leg” in a claim for a device with three legs, it must be clear whether the term refers to one, two, or all three legs.
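The antecedent basis check described above can be roughly approximated in code. The sketch below is a naive heuristic, not a legal test: it flags any single word that follows “the” or “said” without having been introduced earlier by “a” or “an”. The function name `missing_antecedents` and the flying-car claim are hypothetical, and the heuristic ignores multi-word terms, plurals, and terms introduced in a parent claim.

```python
import re

def missing_antecedents(claim: str) -> list[str]:
    """Return words used with 'the'/'said' before any 'a'/'an' introduction."""
    introduced = set()
    flagged = []
    # Each match is (article, following word), scanned left to right.
    pattern = r"\b(a|an|the|said)\s+([a-z]+)"
    for article, term in re.findall(pattern, claim.lower()):
        if article in ("a", "an"):
            introduced.add(term)
        elif term not in introduced and term not in flagged:
            flagged.append(term)
    return flagged

# Hypothetical claim text for illustration only.
claim_text = (
    "A vehicle comprising a frame and a rotor, wherein the rotor is "
    "coupled to the frame and the motor drives the rotor."
)
print(missing_antecedents(claim_text))  # ['motor']
```

Here “the motor” is flagged because no “a motor” appears earlier in the claim. A check like this can catch obvious slips in machine-generated claims, but a human reviewer still needs to confirm every term.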

2. Section 101, 102, and 103 Issues

The claims need to take the prior art into consideration, and this is where a high-quality prior art search helps. The claims should be checked against the results of such a search.

3. Intellectual Property

Copyright infringement is an issue when training an AI model on copyrighted materials. This area of law is rapidly growing and how it affects patent drafting is still evolving.

4. Confidentiality

As noted, patent attorneys should exercise caution when disclosing confidential information to publicly-accessible large language models like ChatGPT, especially before filing a utility application. Any disclosure could be construed as public disclosure of the invention and could lead to loss of patent rights or trigger a one-year time bar for filing a utility application.

5. Attribution Credit

ChatGPT’s terms of service require that any published content be attributed both to its source and AI generation. This could cause some concern for patent attorneys who wish to avoid attribution credit in their patent applications.

Due to these concerns, ChatGPT may not be the best drafting tool for patent lawyers who want to guarantee that their output is accurate, valid, and enforceable. Therefore, ChatGPT should only be used to create briefs and documents that are then reviewed by a patent attorney.

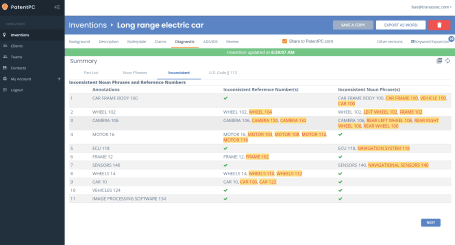

What is needed is a fully fleshed-out system, such as the PowerPatent system, that applies generative AI assistance with the proper guard rails to meet Section 112 requirements and that will soon provide prior art search to aid patent prosecution. You can get a free trial if you enter your law firm email address.

It’s essential to consider the following risks related to attribution credit:

Lack of explicit authorship

ChatGPT generates text based on patterns learned from vast amounts of data, so its output has no explicit author who can be credited. This makes it difficult to identify the author of the ideas and language that the model generates.

Unclear contribution boundaries

ChatGPT has no clear contribution boundary. It is trained using a variety of text sources, including scientific papers, patents, and other publicly available content. It can produce content that looks like existing intellectual property or patents. The question arises as to whether the AI model’s output is a direct contribution from the user or a derivative work that uses existing content.

Ethics

In certain jurisdictions, it may not be legal to attribute authorship or invention solely to an AI. In general, patent laws require that a human author or inventor be identified. Failure to correctly attribute credit to an inventor or author can lead to legal complications or challenges against the validity of the patent.

Ownership and licensing

The output generated by ChatGPT may be subject to copyrights or other intellectual property rights. According to the licensing or terms of use agreements for the AI model, there may be restrictions or limitations on use, ownership, or attribution.

It is important to follow these steps in order to mitigate the risks of using ChatGPT and similar AI models for patent drafting.

Document the involvement of AI Tools

Include in your patent application a statement that clarifies the role of AI software like ChatGPT during the writing process and acknowledges its contribution without attributing authorship or inventorship to it.

Collaborative Approach

Involve human authors or inventors in the patent writing process along with the AI model. Delineate clearly the contributions of both the AI and human entities to ensure that proper credit is given.

Consult legal professionals

Seek advice from attorneys and patent agents who are familiar with AI-generated content in your jurisdiction. They can give guidance on legal requirements and potential challenges.

Conclusion

As an AI language model, ChatGPT can assist in generating patent claims based on the information and instructions one provides. However, it’s important to note that it is not a substitute for a qualified patent attorney or agent. Patent drafting requires careful consideration of legal and technical aspects, as well as an understanding of the specific requirements of the jurisdiction in which you are seeking protection. While ChatGPT can provide general guidance and suggestions, it is always advisable to consult with a legal professional who specializes in patent law to ensure that your claims are accurate, comprehensive, and properly protect your invention. They can review and refine the claims to comply with the relevant patent laws and regulations in your jurisdiction.