Fraud moves fast. Good patents need to move faster. If you are building fraud detection or risk scoring tools, you are not just making software. You are building a system that helps decide who to trust, what to block, when to act, and how to do it in real time. That kind of work can become some of the most valuable IP in your company. But only if the patent is written the right way.

What Makes Fraud Detection and Risk Scoring Patentable

Fraud detection and risk scoring can look simple from the outside. A person applies for something, a system checks the data, and then a score or decision comes out.

But inside that flow, there is often a lot of real invention. For many businesses, the strongest patent value is not in saying that they use AI or that they score risk.

It is in showing exactly how their system reaches better decisions in a faster, smarter, and more reliable way. That is where patentable value often lives.

Patentability Starts With the Business Problem You Solve

Every useful patent starts with a real problem. In fraud and risk systems, the problem is rarely just “detect fraud better.” That framing is too broad, and any competitor can say the same thing.

The real problem is usually more specific. A company may need to catch account takeovers without blocking good users. A lender may need to score thin-file applicants without raising bad debt.

A payments team may need to stop first-party fraud before chargebacks grow. When the invention is tied to a clear business pain point, the patent story gets much stronger.

A useful way to think about this is to ask what your system changed inside the business. Did it cut false positives? Did it improve speed at checkout? Did it reduce manual review load?

Did it help the company approve more good users while still lowering losses? The more clearly you can link the technical work to a real business result, the easier it becomes to show why your approach matters.

The Best Patent Material Often Hides in Daily Operations

Many teams miss patentable ideas because they only look at the model itself. That is a mistake. Some of the strongest inventions sit in the small operational choices that make the system work in the real world.

A company may have built a special way to combine device data with user history.

Another may route edge cases through a staged review flow that learns from reviewer actions. A third may run different scoring logic based on transaction type, geography, or merchant class.

These are not side details.

They can be the heart of the invention. If your system works better because of how data moves, how signals are filtered, how actions are triggered, or how feedback is reused, that may be exactly what makes it patentable.

Patentable Value Is Usually in the System Design, Not the Buzzwords

A lot of teams describe their product with broad terms like machine learning, predictive analytics, anomaly detection, or trust scoring.

Those words may sound impressive, but they do not create strong patent value by themselves. Patentability usually comes from the design choices under the label.

That means the flow of inputs, transformations, decisions, and outputs.

If your fraud engine takes in raw events, normalizes them, groups them by identity graph, measures a sequence of risk factors, applies a dynamic threshold based on account age, and then triggers different downstream actions based on confidence level, that is far more useful than just saying you use AI to detect fraud.

A patent examiner, a competitor, and an investor all care about the actual mechanism.
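To make "the actual mechanism" concrete, here is a minimal sketch of two of the steps named above: a dynamic threshold based on account age, and a downstream action chosen by confidence level. Everything in it is an assumption made for illustration, including the `Event` fields, the point weights, the 30-day cutoff, and the action names. It leaves out normalization and identity-graph grouping entirely.

```python
from dataclasses import dataclass


@dataclass
class Event:
    account_age_days: int
    amount_cents: int
    new_device: bool


def risk_points(event: Event) -> int:
    """Toy additive score from 0 to 100; the weights are illustrative only."""
    points = 0
    if event.new_device:
        points += 40
    if event.amount_cents > 50_000:
        points += 30
    return min(points, 100)


def threshold_for(account_age_days: int) -> int:
    """Dynamic threshold: newer accounts are held to a stricter bar."""
    return 30 if account_age_days < 30 else 60


def decide(event: Event) -> str:
    points = risk_points(event)
    threshold = threshold_for(event.account_age_days)
    if points < threshold:
        return "allow"
    # Confidence is approximated by how far the score clears the threshold.
    margin = points - threshold
    return "block" if margin >= 30 else "step_up"
```

The same event can land on different actions depending on account age, which is the kind of behavior a label like "AI-based fraud detection" never conveys.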

Why Generic AI Language Is Not Enough

Many software teams think that adding “AI-based” to an invention makes it stronger. It usually does not. In fraud and risk products, generic AI language often makes the work sound less distinct, not more distinct.

That is because many companies use some kind of model. What matters is what your model does inside a larger decision system and how it solves a hard problem in a concrete way.

A stronger approach is to explain what was difficult before your system existed. Then explain what your system now does that others were not doing.

Maybe it updates risk based on live user behavior inside a session. Maybe it links activity across accounts that look unrelated at first glance. Maybe it adjusts score weights when source data quality drops. Those details turn a vague software story into patent material.

Novelty Often Comes From How You Combine Signals

One of the most common places where patentable value appears is signal design. Fraud systems rarely rely on a single input. They pull from device features, payment patterns, account behavior, location history, network links, merchant activity, document checks, and many other sources.

But simply using many signals is not enough. What can become patentable is how those signals are selected, weighted, grouped, timed, or reconciled.

A company may have found that certain signals only matter in a specific order. Another may assign different importance to the same signal depending on transaction context.

Another may create a confidence layer that measures whether the signal itself can be trusted before the main score uses it. These kinds of designs can be highly valuable because they are tied to better performance in practical settings.
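As a rough illustration of two of these ideas, the sketch below weights the same signal differently by transaction context and applies a trust check before any signal reaches the main score. The signal names, weights, and the `MIN_TRUST` cutoff are hypothetical, not taken from any real system.

```python
from dataclasses import dataclass


@dataclass
class Signal:
    name: str
    risk: int   # 0-100, higher means riskier
    trust: int  # 0-100, how reliable the source is believed to be


# Hypothetical weights: the same signal matters more or less per context.
WEIGHTS = {
    "checkout": {"device_mismatch": 3, "geo_jump": 1},
    "password_reset": {"device_mismatch": 1, "geo_jump": 3},
}

MIN_TRUST = 50  # assumed cutoff below which a signal is ignored


def combined_score(signals, context):
    """Weighted average of trusted signals, or None if nothing is usable."""
    weights = WEIGHTS[context]
    usable = [s for s in signals if s.trust >= MIN_TRUST and s.name in weights]
    if not usable:
        return None
    total_weight = sum(weights[s.name] for s in usable)
    return sum(weights[s.name] * s.risk for s in usable) // total_weight
```

With a device mismatch at risk 80 and a geo jump at risk 20, both trusted, this sketch returns 65 at checkout and 35 during a password reset. Returning `None` when no signal passes the trust check forces the caller to handle the no-evidence case explicitly instead of treating it as low risk.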

Signal Timing Can Be an Invention

Many founders focus on what signal is used, but not when it is used. Timing matters a great deal in fraud detection and risk scoring. A signal captured during account creation may mean one thing.

The same signal captured during a password reset or card update may mean something else. If your system changes its logic based on event timing, that can be part of the invention.

This becomes even more interesting when the system updates scores during a live flow. For example, if a platform changes a risk score after each new user action in a session and then changes what the user sees next, that is not just static scoring. It is an adaptive decision system.

In many cases, that kind of live score update path is more patent-worthy than the raw score formula.
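A live score update path can be sketched in a few lines. This is a toy model under stated assumptions: the action names, point deltas, and cutoffs are invented for illustration, and a real system would derive them from data.

```python
class SessionRisk:
    """Keeps a running risk score for one session and picks the next step."""

    # Hypothetical point changes per user action.
    DELTAS = {
        "login_new_device": 30,
        "change_email": 25,
        "add_payee": 25,
        "view_balance": 0,
        "passed_otp": -40,
    }

    def __init__(self):
        self.score = 0

    def observe(self, action: str) -> str:
        """Update the score after an action and return what the user sees next."""
        delta = self.DELTAS.get(action, 0)
        self.score = max(0, min(100, self.score + delta))
        return self.next_step()

    def next_step(self) -> str:
        if self.score >= 70:
            return "require_otp"
        if self.score >= 40:
            return "show_warning"
        return "continue"
```

A short usage example:

```python
session = SessionRisk()
session.observe("login_new_device")  # "continue"
session.observe("change_email")      # "show_warning"
session.observe("add_payee")         # "require_otp"
```

The point of the sketch is the loop itself: each action changes the score, and the score changes what happens next within the same session.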

Cross-Signal Reconciliation Can Create Defensible IP

Real-world data is messy. Fraud systems often face conflicting inputs. One signal may suggest a low-risk user while another points to abuse. A strong invention may live in the way the system resolves that conflict.

It may prioritize certain signals only when trust conditions are met. It may create a temporary hold state until more evidence is gathered. It may split the decision into separate fraud and trust tracks before combining them.

This kind of reconciliation logic is often where businesses win or lose money. It is also where patents can become more defensible, because the system is not merely observing data. It is handling uncertainty in a structured and repeatable way.
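One way to picture this is a reconciler that keeps fraud evidence and trust evidence on separate tracks and falls back to a temporary hold when the two are too close to call. The sketch below is only an assumption about how such logic might look; the track labels, the point scale, and the `min_gap` value are hypothetical.

```python
from enum import Enum


class Decision(Enum):
    APPROVE = "approve"
    HOLD = "hold"
    DECLINE = "decline"


def reconcile(signals, min_gap=20):
    """signals is a list of (track, points) pairs, track being 'fraud' or 'trust'."""
    fraud = sum(points for track, points in signals if track == "fraud")
    trust = sum(points for track, points in signals if track == "trust")
    # When the tracks roughly cancel out, wait for more evidence.
    if abs(fraud - trust) < min_gap:
        return Decision.HOLD
    return Decision.DECLINE if fraud > trust else Decision.APPROVE
```

The hold state is the interesting part. It is an explicit third outcome for conflicting evidence, where a simpler system would force an approve or decline.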

Patentability Can Come From What Happens After the Score

Many teams think the score itself is the invention. Sometimes it is. But very often, the real patent value starts after the score is created. That is because the output of a fraud or risk system is rarely just a number.

It usually drives action. Those actions may include blocking a payment, requesting more proof, lowering a transaction limit, sending a case to review, changing user permissions, or watching later behavior more closely.

When your system maps score results into tailored actions in a specific technical way, that can become highly important. A patent can cover not just how the score is calculated, but how it is used to control later system behavior.

Action Routing Is a Strong Claim Angle

A business that wants stronger patent coverage should look hard at its action routing logic. If the platform sends different users into different paths based on both score and context, that can be valuable claim material.

For example, a user with a medium score may be asked for one type of proof on a new device but a different type of proof on a known device. A seller with a rising fraud score may still stay active, but with delayed payouts and tighter limits.

This matters because it ties the score to a practical control system. It helps show that the invention is more than an abstract idea: it is a real operational engine that changes how the platform behaves.
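The two examples above can be sketched as routing functions that take both a score and a piece of context. The cutoffs, proof types, payout delay, and limits here are hypothetical placeholders chosen only to show the shape of the logic.

```python
def route_buyer(points: int, known_device: bool) -> str:
    """Pick a response from the score plus device context."""
    if points < 30:
        return "allow"
    if points >= 70:
        return "block"
    # Medium score: the proof type depends on whether the device is known.
    return "sms_code" if known_device else "document_upload"


def route_seller(points: int, previous_points: int) -> dict:
    """Keep the seller active, but tighten controls when the score is rising."""
    rising = points > previous_points
    if points >= 40 and rising:
        return {"active": True, "payout_delay_days": 7, "daily_limit_cents": 50_000}
    return {"active": True, "payout_delay_days": 0, "daily_limit_cents": 500_000}
```

Note that neither function returns a bare score. Each returns a platform behavior, which is the part this section argues is worth claiming.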

Feedback Loops Can Be the Real Secret Sauce

A lot of fraud systems get better over time because of feedback. The system learns from confirmed fraud, approved transactions, reviewer decisions, user responses, disputes, losses, and recovery outcomes.

But the patentable value is not just that the system learns. It is how that feedback is captured, filtered, labeled, and pushed back into the decision process.

A strong invention may update threshold values based on case outcomes from a defined segment. It may retrain part of the score logic only when drift passes a certain level.

It may separate noisy feedback from verified feedback before using it. These are not basic housekeeping tasks. They can be meaningful technical steps that help the business stay accurate as fraud patterns change.
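A hedged sketch of these three steps follows: filter to verified feedback, adjust a threshold for one defined segment, and gate retraining on a drift level. The `Outcome` fields, step size, minimum case count, and drift limit are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Outcome:
    segment: str
    score: int       # score the event received at decision time
    was_fraud: bool
    verified: bool   # e.g. confirmed by a dispute result or analyst review


def updated_threshold(current, outcomes, segment, step=5, min_cases=3):
    """Nudge one segment's threshold using verified outcomes only."""
    verified = [o for o in outcomes if o.verified and o.segment == segment]
    if len(verified) < min_cases:
        return current  # not enough reliable evidence to change anything
    missed = sum(1 for o in verified if o.was_fraud and o.score < current)
    false_alarms = sum(1 for o in verified if not o.was_fraud and o.score >= current)
    if missed > false_alarms:
        return max(0, current - step)    # stricter: flag at lower scores
    if false_alarms > missed:
        return min(100, current + step)  # looser: flag only at higher scores
    return current


def should_retrain(baseline_rate_pct, recent_rate_pct, drift_limit_pct=2):
    """Retrain only when the fraud rate has drifted past the limit."""
    return abs(recent_rate_pct - baseline_rate_pct) > drift_limit_pct
```

Unverified outcomes never touch the threshold in this sketch, and a segment with too few verified cases is left alone. Those two guards are examples of the filtering choices described above.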

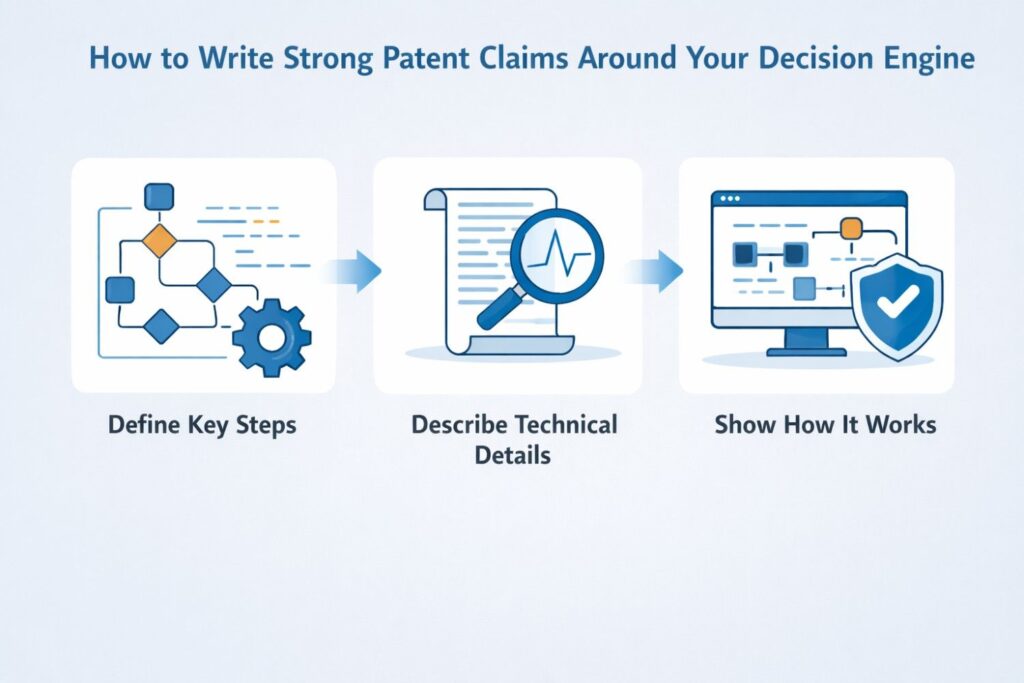

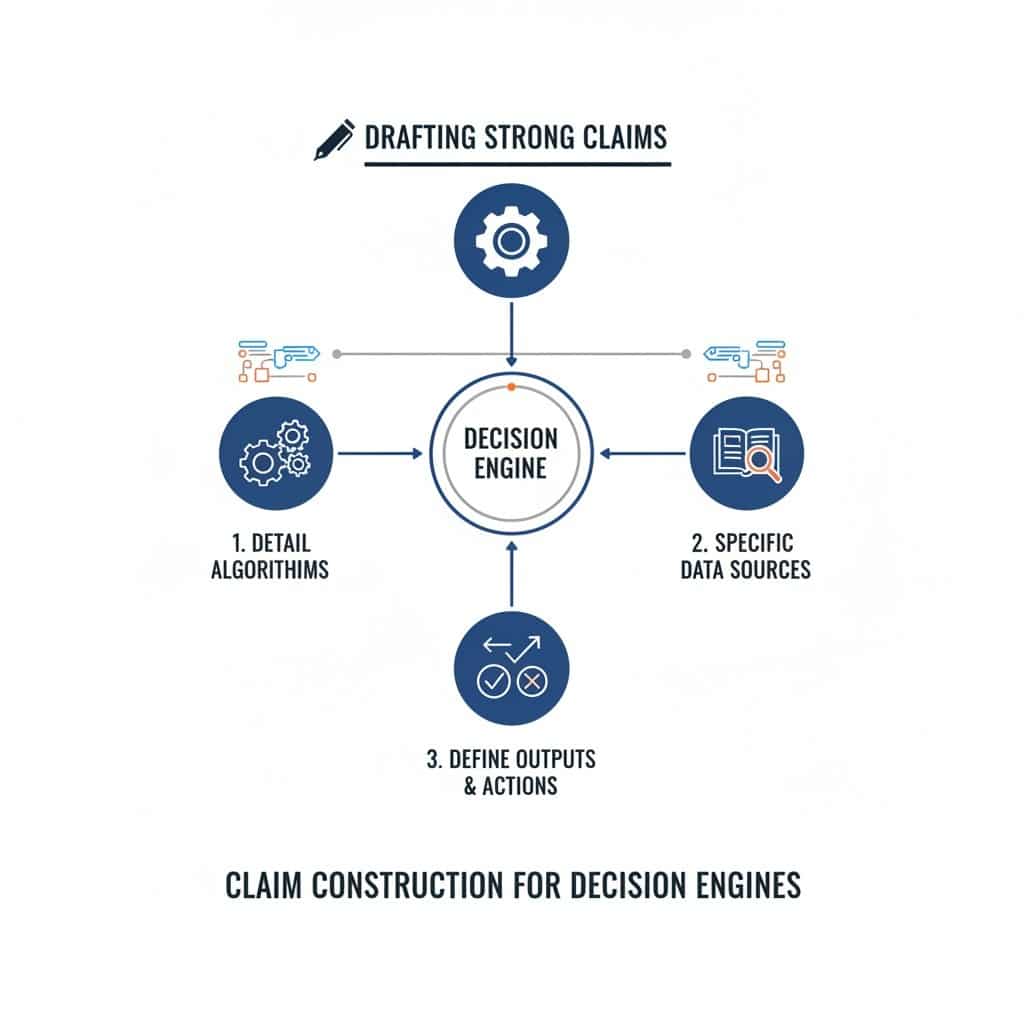

How to Write Strong Patent Claims Around Your Decision Engine

Your decision engine is where your product proves its value. It takes in facts, weighs signals, decides what matters, and triggers the next move. That is not a small thing.

In many fraud detection and risk scoring products, the decision engine is the part that makes the whole business hard to copy. That is why claim drafting around this layer matters so much.

A lot of founders make the same mistake here. They describe the result, but not the path. They say the system scores risk, flags fraud, or blocks suspicious activity. That sounds fine on the surface, but it does not do enough work.

Strong claims usually come from the engine’s inner flow.

They come from how data enters the system, how the system treats that data, how different conditions affect the outcome, and how the final decision changes platform behavior. That is where real protection starts.

Start With the Decision, Not the Feature Name

Many teams begin by naming the feature. They say they built a fraud engine, a trust layer, a risk model, or a rules system. That may help inside the company, but it is not where good claims begin.

A strong claim usually starts with what the engine actually does in a real sequence. It receives one or more inputs, processes them in a defined way, generates a result, and causes some action.

That shift matters because names are easy to copy and easy to work around. Sequences are much harder. If you can describe the decision engine as a living process instead of a product label, you are already much closer to a claim that has real force.

Describe the Motion Inside the Engine

A useful drafting move is to ask what happens first, what happens second, and what happens because of that.

Maybe the engine receives event data tied to a payment attempt, enriches that event with account history, compares the enriched event to linked device behavior, generates a risk score, and then selects an action path based on the score and transaction context.

That is a claimable sequence.

This kind of detail helps because it gives shape to the invention. It turns a broad idea into a working system. It also makes it easier to see which parts are special and which parts are just background.
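Written as code, that sequence might look like the sketch below, with one function per step so the order is explicit. The lookup tables, field names, point values, and channel-specific limits are hypothetical stand-ins for whatever a real engine would use.

```python
# Hypothetical stand-ins for an account store and a device-linkage store.
ACCOUNT_HISTORY = {
    "acct_1": {"age_days": 400, "prior_disputes": 0},
    "acct_2": {"age_days": 3, "prior_disputes": 2},
}
DEVICE_LINKS = {"dev_1": {"acct_1"}, "dev_9": {"acct_2", "acct_7", "acct_8"}}


def enrich(event: dict) -> dict:
    """Step 2: attach account history to the raw payment event."""
    return {**event, **ACCOUNT_HISTORY[event["account_id"]]}


def linked_account_count(enriched: dict) -> int:
    """Step 3: count other accounts seen on the same device."""
    linked = DEVICE_LINKS.get(enriched["device_id"], set())
    return len(linked - {enriched["account_id"]})


def score(enriched: dict, linked: int) -> int:
    """Step 4: toy additive risk score from 0 to 100."""
    points = 30 if enriched["age_days"] < 30 else 0
    points += 15 * enriched["prior_disputes"]
    points += 10 * linked
    return min(points, 100)


def select_action(points: int, channel: str) -> str:
    """Step 5: the action depends on the score and the transaction context."""
    limit = 40 if channel == "card_not_present" else 60
    return "review" if points >= limit else "allow"


def handle_payment_attempt(event: dict) -> str:
    enriched = enrich(event)                   # step 1 is receiving `event`
    linked = linked_account_count(enriched)
    return select_action(score(enriched, linked), event["channel"])
```

Each function maps to one clause of the sequence, which makes it easier to see which steps are ordinary background and which ones carry the distinctive logic.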

Claims Should Protect the Logic, Not Just the Outcome

A weak software claim often sounds like this: receive data, analyze data, output a score. That may be roughly true, but it leaves too much room for others to say they are doing something different.

A stronger claim protects the logic inside that middle step. It explains what kind of analysis is happening and how it leads to a particular kind of decision.

This does not mean you have to expose every secret detail. It means you need to anchor the claim in the real mechanics that make your engine better.

If your system uses a staged score path, a context-based threshold, a confidence adjustment, or a rule-to-model handoff, those details may carry far more value than the final score itself.

Good Claims Follow the Real Technical Choice

The best claim material often comes from moments where your team had to make a hard design choice. Maybe you could not rely on one signal, so you built a way to reconcile several signals with different trust levels.

Maybe you had to act in real time, so you split the engine into a fast screen and a deeper secondary check. Maybe you needed to avoid user friction, so the engine selected actions based on both risk and recovery potential.

Those decisions are useful because they show what your engine actually solved. A claim built around a real technical choice is usually much stronger than one built around a broad business goal.

Frame the Engine as a System That Changes Behavior

Many software patents become weak because they stop at data analysis. But fraud and risk engines do not exist just to think. They exist to change what happens next.

They approve, deny, delay, challenge, escalate, suppress, or watch. That behavior change is important.

When you draft claims around your decision engine, you want to show that the engine controls a later system action in a concrete way.

That makes the invention feel less abstract and more like a working technical process. It also gives you better ground if you ever need to show that another product is doing the same thing.

Tie the Decision to a Platform Response

A practical way to strengthen claim language is to connect the engine’s output to a direct response inside the platform. For example, instead of stopping at “generate a risk score,” go further.

Explain that the system uses the score to place the event into one of several response classes, each with a different next action. The engine might allow a transaction, ask for proof, queue a case for review, or limit an account feature.

This move matters because it ties analysis to control. That is often where the real commercial value sits.

Write Claims Around Inputs That Matter in Combination

Fraud and risk systems rarely succeed because they use one input. They succeed because they combine inputs in a smart way. Strong claims often reflect that.

The key is not to dump every possible signal into the claim. The key is to identify combinations that actually drive better decisions.

If your engine becomes more accurate because it uses event data together with account age, linked identity behavior, and trust quality of the source signal, that combination may deserve attention.

The magic is often not in the existence of each signal. It is in the way they work together inside the engine.

Avoid the Trap of Generic Input Buckets

One common drafting mistake is to use vague phrases like “receiving user data” or “analyzing transaction information.” Those phrases are too loose to do much work.

Better claim language usually reflects the role of the inputs, not just their category.

For example, data associated with a current event, historical activity associated with an account identifier, and cross-account linkage information tied to a shared device each play different roles.

This makes the claim more concrete. It also helps show why the engine reaches a stronger result than a simpler system would.
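One way to test whether a description names roles instead of buckets is to try writing the inputs as separate types. The sketch below does that for the three roles mentioned above. All type and field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CurrentEvent:
    """Data associated with the event being decided right now."""
    event_type: str
    amount_cents: int
    device_id: str


@dataclass(frozen=True)
class AccountHistory:
    """Historical activity associated with an account identifier."""
    account_id: str
    prior_events: int
    prior_chargebacks: int


@dataclass(frozen=True)
class DeviceLinkage:
    """Cross-account linkage information tied to a shared device."""
    device_id: str
    linked_account_ids: frozenset


@dataclass(frozen=True)
class DecisionInput:
    event: CurrentEvent
    history: AccountHistory
    linkage: DeviceLinkage

    def shares_device_with_others(self) -> bool:
        others = self.linkage.linked_account_ids - {self.history.account_id}
        return len(others) > 0
```

If the inputs collapse into a single `user_data: dict`, that is usually a sign the description is still at the generic-bucket level.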

Separate Raw Data From Derived Signals

A smart decision engine often does not act on raw data alone. It creates intermediate signals first.

That is a useful drafting angle. If the engine transforms incoming data into one or more derived indicators before making a final decision, that transformation path can become a very strong part of the claim story.

For example, a system might receive login attempts, derive a session anomaly score, combine that with a prior trust profile, and then generate an action decision.

In that kind of setup, the derived indicator is doing important work. It may be one of the reasons the engine performs better than a basic rule set.
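The login example can be sketched as two functions: one that derives the intermediate indicator from raw attempts, and one that combines it with a prior trust profile. The formula, the bump for many distinct IP addresses, and the cutoffs are assumptions for illustration only.

```python
def session_anomaly(login_attempts) -> int:
    """Derived indicator (0-100): failure share, plus a bump for many IPs."""
    if not login_attempts:
        return 0
    failures = sum(1 for a in login_attempts if not a["success"])
    distinct_ips = len({a["ip"] for a in login_attempts})
    points = 100 * failures // len(login_attempts)
    if distinct_ips >= 3:
        points += 20
    return min(points, 100)


def action_decision(anomaly: int, prior_trust: int) -> str:
    """Combine the derived indicator with a prior trust profile (0-100)."""
    effective = max(0, anomaly - prior_trust // 2)
    if effective >= 60:
        return "lock_and_notify"
    if effective >= 30:
        return "challenge"
    return "allow"
```

The final decision never reads the raw attempts directly. It only sees the derived anomaly value, which is what makes that value a distinct, describable step.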

Intermediate States Can Make Claims More Defensible

A lot of founders overlook intermediate states because they seem like internal plumbing. In practice, they can be highly valuable.

If your system creates a temporary suspicion state, a confidence flag, a segment-specific score, or a challenge readiness value before taking action, those steps can help define what is unique.

Intermediate states make claims harder to evade because they reflect the engine’s internal logic.

A competitor may try to avoid your exact score formula, but if they use the same staged logic and same internal state transitions, your claims may still matter.

Claim the Sequence That Improves the Trade-Off

Fraud engines are full of trade-offs. More strict logic can stop more bad activity but hurt good users. Faster decisions can improve conversion but reduce depth.

Stronger review paths can improve trust but increase cost. The best decision engines do not remove trade-offs completely. They improve them.

That is why one of the strongest claim strategies is to focus on the engine sequence that improves a known trade-off.

If your system lowers false positives without weakening fraud capture, or raises approval rates without raising loss, the path that creates that gain may be exactly what the claims should protect.

Business Gains Should Point You Back to Technical Steps

A useful internal exercise is to start with the gain and then walk backward. Ask what exact engine behavior created that result. Did the engine compare a live event against a rolling profile instead of a static rule set?

Did it delay a hard block until after one more verification layer? Did it treat new and mature accounts differently? Did it adjust score interpretation based on source confidence?

The patent claim should usually live in those technical steps, not in the business number alone. The business result helps you identify the important part, but the claim needs the mechanism.

Use Different Claim Angles Around the Same Engine

A strong patent strategy does not describe the decision engine in only one way. The same engine can often support several different claim angles. One angle may focus on how data is gathered and transformed.

Another may focus on the scoring path. Another may focus on action routing. Another may focus on feedback updating or model refresh conditions.

This matters because different claim angles create different layers of protection. If one angle turns out to be too broad or too close to prior work, another may still hold up well.

For businesses, this means the goal is not to find one perfect sentence. The goal is to map the engine from several sides and capture its strongest moving parts.

The Broad Idea and the Narrow Detail Should Work Together

At the claim drafting stage, you usually want both reach and support. A broader framing may describe the engine at the system level.

Narrower framing may capture very specific steps that give the broader claim support and depth.

That balance matters because the broader story helps cover more territory, while the narrower story often gives the invention a stronger backbone.

For a founder, the lesson is simple. Do not stop at the first description of your engine. Push further.

Describe the overall flow, then describe the special conditions, then describe the fallback path, then describe what happens when confidence is low or latency matters. That is how stronger claims begin to take shape.

Common Claim Drafting Mistakes That Leave Fraud IP Exposed

Fraud detection and risk scoring teams often think the hard part is building the product. In truth, that is only half the job. The other half is protecting what makes the product work.

This is where many strong companies lose ground. They build a real edge, but their patent claims fail to capture it. The result is painful. The patent may exist on paper, but the core value of the system is still easy for others to work around.

That risk is even higher in fraud and risk products because the space moves fast and many systems sound similar from the outside.

Two companies may both say they use machine learning, behavior signals, device data, and real-time scoring.

But the inner design can be very different. If the claims stay broad, vague, or disconnected from the real system, that difference may never get protected.

Mistake One: Describing the Goal Instead of the Mechanism

A very common drafting mistake is to write claims around the business goal rather than the actual technical path.

The claim says the system detects fraud, assigns a risk score, or prevents bad activity. That may sound useful at first, but it usually does not go far enough.

Almost every product in the space tries to do those things. What matters is how your system gets there.

A stronger patent claim does not stop at the result. It explains the sequence that produces the result. It shows what data is received, how it is processed, what intermediate steps matter, and what action follows.

Without that detail, the claim often leaves open too many easy ways for others to say they are doing something different while still copying the value of your product.

Why this mistake is so costly

When a claim focuses only on the goal, it usually becomes too thin. It may be hard to defend, hard to distinguish from prior work, and hard to use against a competitor.

This is not just a legal issue. It is a business issue. You can spend time and money on filing, yet still fail to lock down the part of the stack that creates margin, trust, and growth.

What businesses should do instead

A practical fix is to write down the exact path from signal to action. Do not start with “we detect fraud.”

Start with “the system receives a transaction event, enriches it with linked account behavior, calculates a confidence-adjusted risk value, and selects one of several response paths.”

That kind of wording gets much closer to the true engine. It also forces the team to identify what is really different about the product.

Mistake Two: Hiding Behind Generic AI Language

Another common error is leaning too hard on broad AI terms. Companies say they use artificial intelligence, machine learning, predictive analysis, anomaly detection, or adaptive scoring.

Those labels may sound modern, but they rarely protect the invention by themselves. In many cases, they actually make the patent weaker because they blur the real design choices that matter.

In fraud products, generic AI language is especially risky because so many systems already use some form of model. The claim needs to show what the model is doing inside the larger decision framework.

If the patent never explains how the system selects signals, adjusts thresholds, handles timing, resolves conflicts, or controls later actions, then the AI language adds very little value.

Why vague model language leaves room for copycats

A competitor does not need to copy your exact model to copy your business advantage.

They may use a different algorithm but still mimic the same workflow, the same branching logic, the same feedback path, or the same response control system.

If your claims focus only on the existence of AI, they may miss the real place where others can clone your value.

The better way to frame the invention

The stronger move is to treat the model as one part of a larger machine. Describe how the model fits into the flow.

Explain what goes into it, what comes out, what other system rules affect it, and what the platform does with the result. That makes the patent more grounded and more useful.

Mistake Three: Claiming the Score but Not the Action

Many teams treat the score as the end of the story. They draft around generating a fraud score or a risk value and stop there. But in real products, the score is rarely the full invention.

The real value often comes from how that score changes what happens next. That next step may be approval, denial, review, delayed payout, added proof, session monitoring, or account restriction.

If the claims fail to cover what the system actually controls after scoring, the company may miss one of the most valuable parts of its IP.

A rival can often redesign the scoring layer while keeping the action logic that drives business results. That is why this mistake can leave a lot of real product value exposed.

Why action control often matters more than the number

A score on its own is just a signal. Its commercial impact comes from the response it triggers.

In fraud and risk systems, that response often determines user friction, loss prevention, review cost, and conversion.

If the invention includes a special way of routing actions based on score plus context, that may be far more important than the score formula itself.

A more strategic claim focus

Businesses should look closely at the response engine tied to the score. Does the platform choose different proof requests for different user states? Does it lower limits for one group while escalating another?

Does it hold activity temporarily until a second condition is met? These details can produce strong claims because they tie the invention to clear platform behavior.

Mistake Four: Ignoring the Importance of Intermediate States

A lot of patent drafts jump from raw data to final decision too quickly. They skip over the middle. But the middle is often where the best invention sits. Fraud systems commonly create internal states before the final output.

They may generate suspicion indicators, confidence values, segment-specific signals, or provisional risk states. These steps can be central to why the engine performs well.

When those intermediate layers are left out, the claims often become too shallow. They describe a simple pipeline, even though the actual system is far more thoughtful and structured. That creates unnecessary weakness.

Why the middle layer deserves protection

Intermediate states often capture the real craft of the system. They show how the engine breaks down a hard problem into smaller parts before acting.

This is often where uncertainty gets handled, conflicting data gets sorted, and downstream actions get shaped. If that layer is ignored, the patent may fail to reflect the company’s real edge.

A useful drafting habit

When preparing material for patent work, teams should ask what temporary values, internal flags, or stage-based outputs exist before the final action.

Those items may look ordinary to engineers because they see them every day. But from a patent point of view, they may be some of the most valuable details in the whole system.

Mistake Five: Treating All Inputs as If They Matter the Same Way

Fraud detection systems often use many signals, but not all signals play the same role. Some are highly trusted.

Some are weak but still helpful in context. Some only matter at one point in a user journey. Some matter more when paired with another signal. A weak patent draft often hides this nuance by using broad phrases like user data, transaction information, or behavioral signals.

That flattening creates problems. It fails to show what makes the engine smart. It also gives up a chance to protect the special combinations and conditions that create better performance.

Why signal handling is often where the invention lives

In many businesses, the system advantage comes from how signals are chosen, weighted, sequenced, and interpreted. One company may rely heavily on device trust during account recovery.

Another may use network linkage only after a trigger event. Another may adjust weight based on source reliability. These are not minor details. They often drive the real results.

What a smarter drafting approach looks like

A better approach is to describe the role of the inputs, not just their category.

Explain which signals relate to the current event, which represent past behavior, which come from linked accounts, and which are used to measure confidence in other signals. This makes the invention more concrete and much harder to reduce to generic software language.

Mistake Six: Failing to Claim the Branching Logic

Decision engines are powerful because they do not always do the same thing. They branch.

They respond differently based on account age, event type, confidence level, device status, transaction size, or prior outcomes. Yet many claim drafts flatten the system into one straight path. That is a major mistake.

When branching logic is omitted, the claim often misses the adaptive nature of the engine. It describes the system as if it were static when the real value may come from how it changes behavior in different situations.

Why branching is often the true source of product strength

A fixed process is easier to copy because it is simple. A conditional process is harder to copy because it reflects hard-earned business learning. It shows that the company did not just build a score.

It built a system that knows when to use one path instead of another. That flexibility can be one of the most important parts of the IP.

How to surface branching in patent content

A practical way to find claim-worthy branching is to map every point where the system chooses between paths. Ask what condition causes the branch. Ask what changes on each side.

Ask why that split improves speed, safety, accuracy, or user experience. Those answers often reveal claim language that is far stronger than a single linear description.

Mistake Seven: Leaving Out Time and Sequence

Timing matters a lot in fraud detection and risk scoring. The same signal may mean very different things depending on when it appears. The order of events may matter. The score may update during a live flow.

The platform may apply a fast screen first and a deeper review later. If the patent ignores timing, it may ignore one of the sharpest parts of the invention.

This mistake is easy to make because timing can feel like an implementation detail. But in many businesses, timing is exactly what creates the edge. It can improve speed, reduce cost, lower friction, and sharpen risk decisions at once.

Why sequence can be patent-worthy on its own

A system that checks ten signals all at once is different from a system that checks three first, then pulls more only if needed.

A system that updates risk during a session is different from one that scores only at the start. A system that changes thresholds after a trigger event is different from one that keeps them fixed. These timing choices can be meaningful invention points.
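The first contrast, checking a few signals first and pulling more only if needed, can be sketched as follows. The signal names, point values, and the `low` and `high` cutoffs are hypothetical, and `fetch_slow_signals` stands in for any slower or costlier lookup.

```python
def staged_score(fast_signals, fetch_slow_signals, low=20, high=70):
    """Return (points, action, used_slow_signals).

    fast_signals is a dict of cheap signal points already in hand.
    fetch_slow_signals is a callable that is invoked only when the
    fast screen cannot decide on its own.
    """
    fast = sum(fast_signals.values())
    if fast < low:
        return fast, "allow", False
    if fast >= high:
        return fast, "block", False
    # Ambiguous middle band: pay for the deeper check.
    slow = fetch_slow_signals()
    total = fast + sum(slow.values())
    return total, ("block" if total >= high else "allow"), True
```

Passing the slow lookup as a callable makes the timing choice visible in the code: clear cases never trigger it, so the order of steps is part of the design and not an accident of implementation.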

How to make time visible in the draft

Teams should explain when each step happens and why. Does the system enrich data before or after linkage? Does it challenge the user before or after scoring?

Does it recalculate after a new action? Does it delay final action until a confidence threshold is crossed? These details help turn an abstract process into a concrete, working system.

Mistake Eight: Overlooking the Feedback Loop

Fraud systems often improve through feedback, but many patent drafts talk about this in a weak and blurry way. They say the system learns from outcomes or updates over time.

That is not enough. The useful invention is often in the details of how feedback enters the system, how it gets filtered, what counts as reliable, and what part of the decision framework it changes.

When those details are missing, the patent may fail to protect the adaptive engine that makes the product better month after month. That is a major missed chance for businesses whose edge grows with use.

Why vague learning claims usually underdeliver

Almost every modern software company can say its product gets better from data.

That statement alone does not create much distance from the field. The stronger position comes from showing how your system treats different outcomes differently, how it updates only certain thresholds or paths, or how it separates strong feedback from noisy feedback before making changes.

A more practical way to draft around feedback

A smart drafting exercise is to map the full route of one outcome. If a fraud case is confirmed, what happens next inside the system? Which values get updated? Which paths become more strict?

Which segment rules change? Which actions are not changed because the evidence is weak? That level of detail can create far more durable claim support.

Mistake Nine: Drafting Too Broad Too Early

Founders often want broad patents. That instinct makes sense. They want strong coverage and room to grow.

But many teams reach for broad language before they have anchored the invention in a concrete use case. The result is often weak drafting that sounds large but says very little.

This is especially dangerous in fraud and risk products because the space is already full of broad language. If the claim sounds like it could describe dozens of existing systems, it may not protect much of anything.

Wrapping It Up

Fraud detection and risk scoring can create serious business value. But value alone is not enough. If your patent claims do not cover the real engine behind that value, your best ideas can stay exposed. That is the core lesson here. Strong protection does not come from saying you use AI, machine learning, or smart scoring. It comes from showing how your system actually works. It comes from the flow of data, the way signals are combined, the timing of the decisions, the actions triggered after scoring, and the feedback loops that keep the system sharp over time.